Searching for the Higgs Boson with the ATLAS detector at CERN

Summary. This report summarises a particle physics project performed by the author, which searched for evidence of the Higgs boson using large quantities of Monte Carlo simulated data and real data from the ATLAS experiment at CERN.

This work investigates the potential of the ATLAS detector to find a Standard Model Higgs boson produced via Vector Boson Fusion (VBF) and decaying to two Z bosons, which in turn decay to two leptons and two neutrinos. The ATLAS detector is a general purpose detector at CERN that records the proton-proton collisions produced by the Large Hadron Collider. This channel has been shown to have high sensitivity at large Higgs mass, where the large missing energy in the signal provides good discrimination against the expected backgrounds. This work takes a first look at whether the sensitivity of the channel can be improved by using the remnants of the vector boson fusion process for extra discrimination. This is particularly relevant at lower mass, where the sensitivity of the main analysis is reduced because the signal has less missing energy.

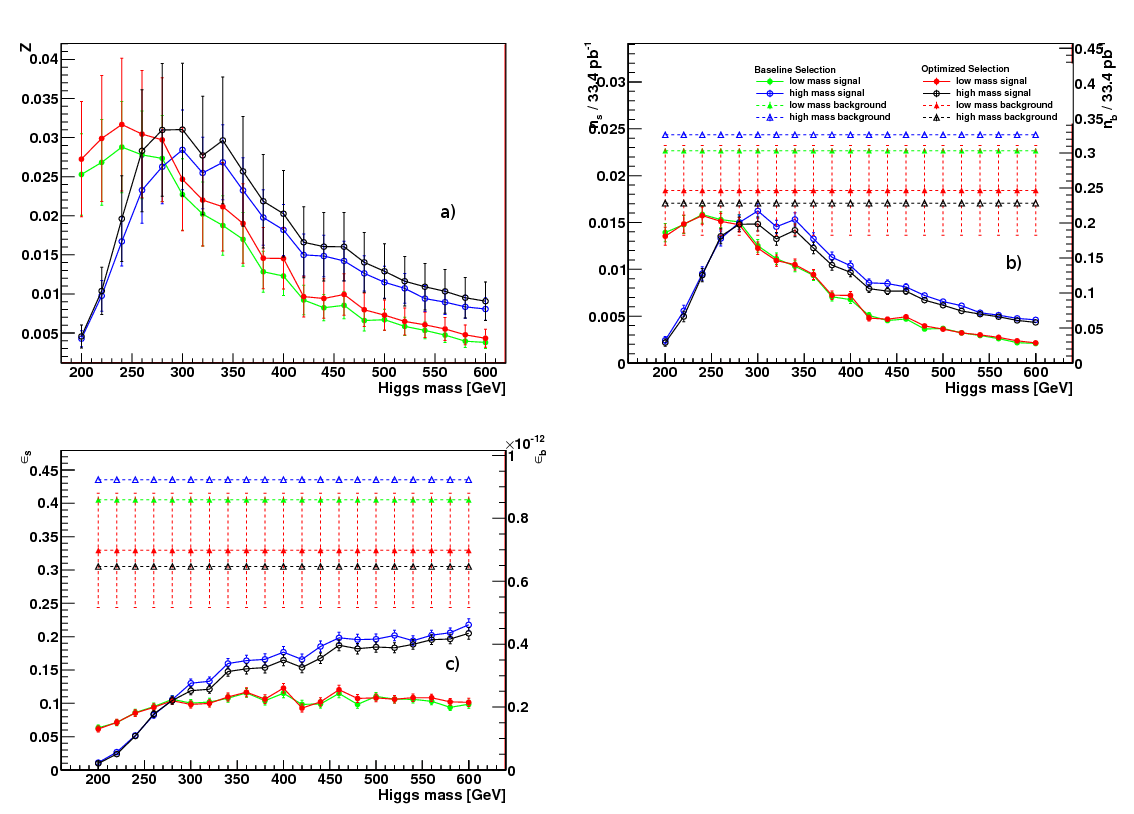

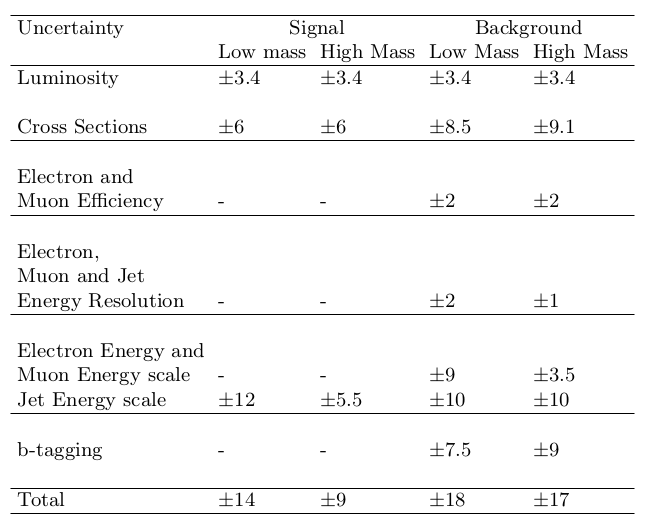

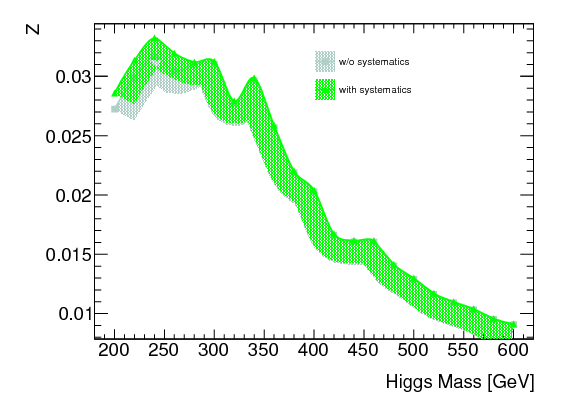

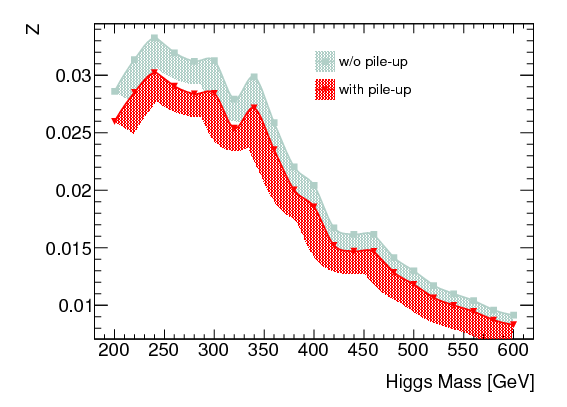

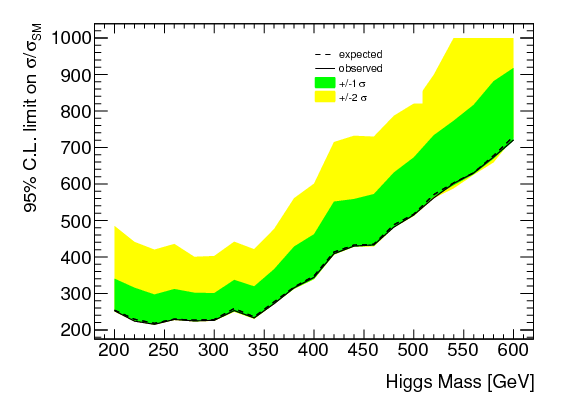

Simulated data samples at a centre-of-mass energy of 7 \( \mathrm{TeV} \) are used to derive signal significances over the Higgs mass range 200-600 \( \mathrm{GeV}/c^{2} \). Because the signal properties vary with mass, separate low-mass and high-mass event selections were developed and optimized. The variables used in the analysis are compared between simulated and real data (collected in 2010). The effect of pile-up levels corresponding to those in the 2010 data is also investigated. Possible methods to estimate some of the main backgrounds to this search are described and discussed. The impact of the most important theoretical and detector-related systematic uncertainties is taken into account. Final results are presented as 95 \( \% \) Confidence Level exclusion limits on the signal cross section relative to the SM prediction, as a function of Higgs boson mass. They are based on an integrated luminosity of 33.4 \( \mathrm{pb}^{-1} \) of data collected during 2010.
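The limits above are quoted as 95 \( \% \) CL upper limits on the signal cross section divided by the SM prediction (the signal strength \( \mu \)). The procedure actually used is described in the section Results in form of Limits and is not reproduced here. Purely as an illustration, the sketch below computes a CLs upper limit on \( \mu \) for a single counting bin with Poisson statistics and no systematic uncertainties. The function names and the numbers in the usage line are hypothetical, and this is not claimed to be the analysis method.

```python
import math


def poisson_cdf(n, lam):
    """P(N <= n) for N ~ Poisson(lam), summed term by term."""
    term = math.exp(-lam)
    total = term
    for k in range(1, n + 1):
        term *= lam / k
        total += term
    return total


def cls_ratio(mu, n_obs, s, b):
    """CLs = CL_{s+b} / CL_b, using the observed event count as test statistic."""
    return poisson_cdf(n_obs, mu * s + b) / poisson_cdf(n_obs, b)


def upper_limit_mu(n_obs, s, b, cl=0.95, tol=1e-6):
    """Upper limit on mu (signal yield = mu * s): smallest mu with CLs <= 1 - cl."""
    alpha = 1.0 - cl
    lo, hi = 0.0, 1.0
    # CLs decreases monotonically with mu, so first double hi until it is excluded...
    while cls_ratio(hi, n_obs, s, b) > alpha:
        hi *= 2.0
    # ...then bisect the bracket [lo, hi] down to the requested tolerance.
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if cls_ratio(mid, n_obs, s, b) > alpha:
            lo = mid
        else:
            hi = mid
    return hi


# Hypothetical inputs: 3 events observed, 2.5 background and 1.2 SM signal events expected.
print(f"95% CL limit on sigma/sigma_SM: {upper_limit_mu(3, 1.2, 2.5):.2f}")
```

A limit below 1 would exclude the SM Higgs boson at that mass. With zero observed events, CLs reduces to \( e^{-\mu s} \), which gives a convenient cross-check.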

Skills used:

- Data cleaning (see the section Physics Objects) (cross-checking of simulated (Monte Carlo) and real data and, where necessary, making modifications to the Monte Carlo, i.e. accounting for detector effects and pile-up).

- Feature Selection (see the sections Feature Selection and Signal and Background Processes) (development of two cut-based selections, as a function of Higgs mass, to separate the Higgs signal from its backgrounds, based on physics arguments and cross-checks of distributions).

- Big Data Analysis (see the section Signal and Background Processes for a description of some of the data samples used) (analysis of multiple TB-scale datasets using the CERN worldwide computing Grid, and batch analysis with qsub).

- Optimization (see the section Optimization of Feature Selection cuts) (optimization of the feature selection, yielding approximately a 10 \( \% \) improvement in signal sensitivity relative to the baseline selection).

- Algorithm development (Individually wrote all code used in the analysis phases of the study, including multidimensional optimization code).

- Statistics (see the section Results in form of Limits) (hypothesis testing).

- Data Visualisation and documentation (workflow, analysis and presentation of results using CERN ROOT and LaTeX).

- Linux (the study was performed entirely on Linux computers, mostly accessed remotely through a terminal).

- End to end analysis (in depth study with real aims and outcomes).

- Data migration (the analysis used different data formats at different stages: Monte Carlo and data sets containing all detector information were reduced in size by keeping only the required information, allowing for more efficient analysis).

- Programming (C++, Python, make).

- Mathematics.

- Version control (SVN).

Motivation

The existence of the Higgs boson was predicted theoretically by Peter Higgs [1] and others [2] [3] in 1964. It forms an integral part of our framework for understanding elementary particles and their interactions. Arguably it was the main motivation for building the LHC and its associated experiments, CMS and ATLAS, whose purpose was to find experimental evidence for such a particle. The CMS and ATLAS detectors are, in essence, gigantic microscopes. By colliding very high energy protons (provided by the LHC) at their centre, they allow researchers to investigate the fundamental constituents of matter and the forces between them. The energies at which protons collide at the LHC were, and remain, the highest reached by any collider in the world. These energies give access to the 'small scale' at which evidence for the Higgs boson could be found experimentally. Protons are not fundamental; they are themselves made of constituent parts. Partly for this reason, colliding large numbers of high energy protons produces a very messy mix of particles, with a huge number of possible outcomes depending on the physics of the individual collisions. Theoretically, producing a Higgs boson is very unlikely compared to the multitude of other, more probable processes. The theory also predicts that the Higgs boson can only be detected through its decay products, i.e. what it 'turns into'. There are numerous theoretically predicted decay channels for the Higgs boson, as well as a number of mechanisms by which it can be produced. Using the ATLAS detector at CERN to search for the Higgs boson is therefore very much like 'searching for a needle in a haystack'.
In essence, the problem is to use vast amounts of data to find statistical evidence for a signal (the Higgs boson) amongst a much larger background of particles produced by other processes occurring at a much higher rate. Our theoretical understanding of all these processes is modelled using Monte Carlo simulation. The simulation follows each physical process resulting from the proton-proton collisions right through to the final particles 'seen' in the detector, and also models the passage of these particles through the detector. This theoretical representation (in all the different production and decay modes) can be compared to real data recorded by the detector and stored for later use, allowing theoretical ideas to be tested. This is what was subsequently done in 2012, when the Higgs boson was discovered at the 5 \( \sigma \) level (i.e. an excess of events with a statistical significance of five standard deviations above the background expectation). The search the author was involved in took place before this, when the experiments had recorded much less data, yet important work could nonetheless be done. The search focused on a Higgs boson produced in a particular way (vector boson fusion) and decaying to two Z bosons. This gives rise to a final state of two jets, two leptons and two neutrinos, where the neutrinos escape the detector and are inferred from missing energy. It was the first search of its kind performed within the ATLAS experiment.

Introduction

The Standard Model of Particle Physics

Elementary particles and their interactions

Our current understanding of the physical world in terms of fundamental matter particles and their interactions is largely based on the Standard Model (SM) of particle physics. It describes all known elementary particles and three of the four known fundamental interactions (the electromagnetic, weak and strong interactions). Within the SM, particles are classified by their spin as either:

- half-integer spin particles called fermions, which obey Fermi-Dirac statistics. These are the matter particles.

- integer spin particles called bosons, which obey Bose-Einstein statistics. The exchange of (gauge) bosons between the fermions describes the fundamental interactions.

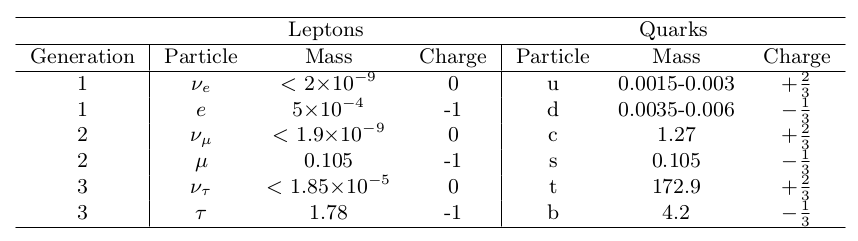

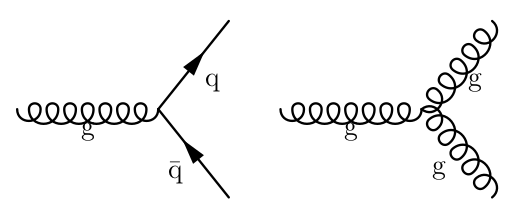

Figure 1: The fermions and some of their basic properties. Masses are given in \( \mathrm{GeV}/c^{2} \) (the electron volt is a unit of energy, so this unit of mass follows from rearranging \( E = mc^{2} \)), and electric charge is given in units of the electron's charge, \( |e| \) [4].

The first generation of quarks consists of the up (u) quark with electric charge +\( \frac{2}{3} \) (in units of the electron charge, \( |e| \)) and the down (d) quark with electric charge -\( \frac{1}{3} \). Each of the other generations also consists of a u-type and a d-type quark, but they are successively heavier than the first generation. The second generation consists of the strange (s) and charm (c) quarks, while the third generation consists of the bottom (b) and top (t) quarks. Quarks carry colour charge, so each comes in three distinct colour states (red, green or blue). Each lepton doublet is composed of an electrically charged lepton and its corresponding neutral neutrino. As with quarks, the mass of the charged lepton increases with generation. The first generation consists of the electron (\( e \)) and its neutrino (\( \nu_{e} \)), the second of the muon (\( \mu \)) and its neutrino (\( \nu_{\mu} \)), and the third of the tau (\( \tau \)) and its neutrino (\( \nu_{\tau} \)). Each quark and lepton has a corresponding anti-particle, denoted with a bar. Anti-particles have the same mass as the corresponding particle but opposite electric charge. In nature, quarks are only found within composite hadrons. These are either baryons, made of three quarks, or mesons, made of a quark and an anti-quark.
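As a small worked example of the charge assignments above, the sketch below adds up the quark charges of a few familiar hadrons. Exact fractions avoid rounding. The helper name `hadron_charge` is hypothetical, and only the first-generation charges quoted in the text are used.

```python
from fractions import Fraction

# Electric charges of the first-generation quarks in units of |e| (from the text above)
QUARK_CHARGE = {"u": Fraction(2, 3), "d": Fraction(-1, 3)}


def hadron_charge(quarks, antiquarks=""):
    """Net charge of a hadron; each anti-quark carries minus the charge of its quark."""
    return (sum(QUARK_CHARGE[q] for q in quarks)
            - sum(QUARK_CHARGE[q] for q in antiquarks))


print(hadron_charge("uud"))     # proton (uud)
print(hadron_charge("udd"))     # neutron (udd)
print(hadron_charge("u", "d"))  # pi+ meson (u, anti-d)
```

The fractional quark charges always combine into integer hadron charges, which is consistent with quarks only being observed inside hadrons.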

Interactions between the fermions are mediated by the emission and absorption of integer spin particles called bosons. Four fundamental forces are known, summarised in Figure 2. The electromagnetic force binds electrons to nuclei and, more generally, underpins the formation of molecules and hence chemistry. It is mediated by the photon (\( \gamma \)). The strong force binds quarks into hadrons and, as a residual effect, holds nuclei together. It is mediated by eight massless gluons (g). The weak force is responsible for \( \beta \) decay and is mediated by the exchange of \( W^{\pm} \) and Z bosons. Gravity governs structure on large scales, such as the formation of galaxies. It is by far the weakest of the forces and is negligible at the energy scales considered in particle physics. In principle, gravity could be described as being mediated by the exchange of a hypothetical boson called the graviton.

Figure 2: The mediators of the four fundamental fermion interactions and some of their basic properties. Relative strength corresponds to the typical strength of the forces compared to the strong force between two protons separated by \( \approx \) 15 \( \mathrm{fm} \) [5]. Here \( l \) refers to lepton ( \( e,\mu,\tau \) ).

The Standard Model

The SM is a quantum field theory [6] in which the elementary particles are the quanta of underlying fields and the interactions arise from the principle of local gauge invariance. Attempts to incorporate gravity into this framework have so far failed. The SM was developed in stages, starting with the quantum field theory of electromagnetic interactions, Quantum Electrodynamics. In the 1960s, a unified electroweak theory combining the electromagnetic and weak interactions was developed. Finally, the electroweak theory was combined with the theory of the strong interactions (Quantum Chromodynamics) to give what is understood as the SM today. Formulated in the conventional gauge-invariant way, however, the theory requires the gauge bosons to be massless. Experimental observations show that this is not the case for the W and Z bosons.

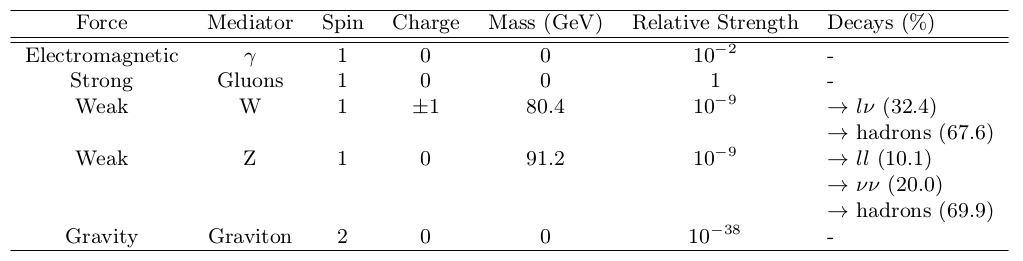

Quantum Electrodynamics

All quantum electromagnetic interactions consist of the interaction of charged fermions with the quantum of the electromagnetic field, the photon, and are described by Quantum Electrodynamics (QED). The most basic such interaction, shown in Figure 3, is the interaction of a charged fermion f with a photon \( \gamma \). As with all interactions, its strength is characterized by a coupling constant associated with each vertex. The electromagnetic force couples to electric charge, so the fermion's charge sets the strength of electromagnetic interactions. This vertex is the basic building block from which all QED processes can be represented, and complete QED processes represented in this way are called Feynman diagrams. The diagrams with the smallest number of vertices for a given process contain no internal loops and are referred to as tree-level or leading-order diagrams. Diagrams with more vertices, for example those containing internal loops, are called higher-order diagrams. A complete description of any QED process is obtained by summing over all possible internal states, which corresponds to summing over all Feynman diagrams of all orders.

Figure 3: The basic QED vertex involving a \( \gamma \) and a charged fermion f.
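As a worked illustration of how the vertex coupling controls the size of a process (standard QED counting, not specific to this analysis), each vertex contributes a factor proportional to \( Q_{f}\sqrt{\alpha} \). Here \( Q_{f} \) is the fermion charge in units of \( |e| \) and \( \alpha \) is the fine-structure constant:

$$ \alpha = \frac{e^{2}}{4\pi\varepsilon_{0}\hbar c} \approx \frac{1}{137}, \qquad \mathcal{M}_{n\ \mathrm{vertices}} \propto \left(\sqrt{\alpha}\right)^{n}, \qquad \sigma \propto |\mathcal{M}|^{2}. $$

For example, \( e^{+}e^{-}\rightarrow\mu^{+}\mu^{-} \) proceeds at leading order through two vertices, so \( \sigma \propto \alpha^{2} \). A one-loop diagram has two extra vertices, and its interference with the leading-order amplitude changes the cross section by a relative amount of order \( \alpha \). This is why the lowest orders usually suffice for QED predictions.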

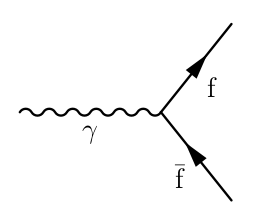

Quantum Chromodynamics

Quantum Chromodynamics (QCD) is the quantum field theory describing the strong interactions. The strong force couples to colour charge, so only the coloured quarks and gluons take part in strong interactions. The most basic QCD vertex, the interaction of quarks (q) with a gluon (g), is shown in the left-hand diagram of Figure 4. Because gluons themselves carry colour charge, they can also interact with each other, as shown in the right-hand diagram.

Figure 4: Basic QCD interaction vertices involving (left) a gluon (g) and a quark (q) anti-quark (\( \bar{q} \)) pair and (right) gluon self-interaction.

Weak and Electroweak Interactions

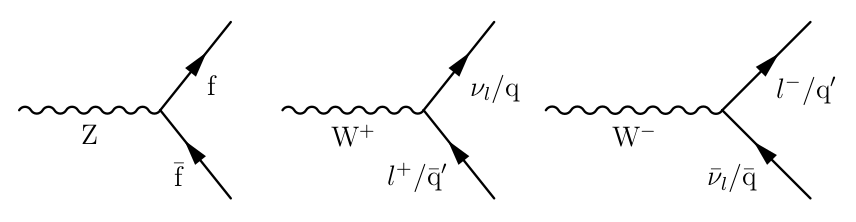

The basic weak interaction vertices allowed in the SM are shown in Figure 5.

Figure 5: Weak interaction vertices allowed in the SM.

The Higgs Mechanism

The mechanism of spontaneous electroweak symmetry breaking in a non-abelian gauge theory was introduced by Peter Higgs [1] and others [2] [3] in 1964. It provides a way for the gauge bosons to acquire mass, solving the problem of massless gauge fields. This is commonly known as the Higgs mechanism. The Higgs boson mass is a free parameter of the SM and so cannot be predicted. Nevertheless, there are a number of ways to constrain it, broadly speaking through theoretical arguments and through experiment. Experimental constraints on the Higgs boson's mass come from searching for its production by colliding particles at high energy in particle colliders.

The LHC began operation in 2009 and has collided protons at \( \sqrt{s} \) = 7 \( \mathrm{TeV} \) since 2010; since then, searches for the SM Higgs boson using LHC data have become well established. As of summer 2011, the ATLAS Collaboration had combined several search channels, each using between 1.0 and 2.3 \( \mathrm{fb}^{-1} \) of data. The resulting preliminary results [7] excluded the Higgs boson mass ranges 146 to 232 \( \mathrm{GeV}/c^{2} \), 256 to 282 \( \mathrm{GeV}/c^{2} \) and 296 to 466 \( \mathrm{GeV}/c^{2} \) at 95 \( \% \) C.L. In the absence of a signal, the expected exclusion range was 131 to 447 \( \mathrm{GeV}/c^{2} \). The CMS Collaboration presented similar results in summer 2011.

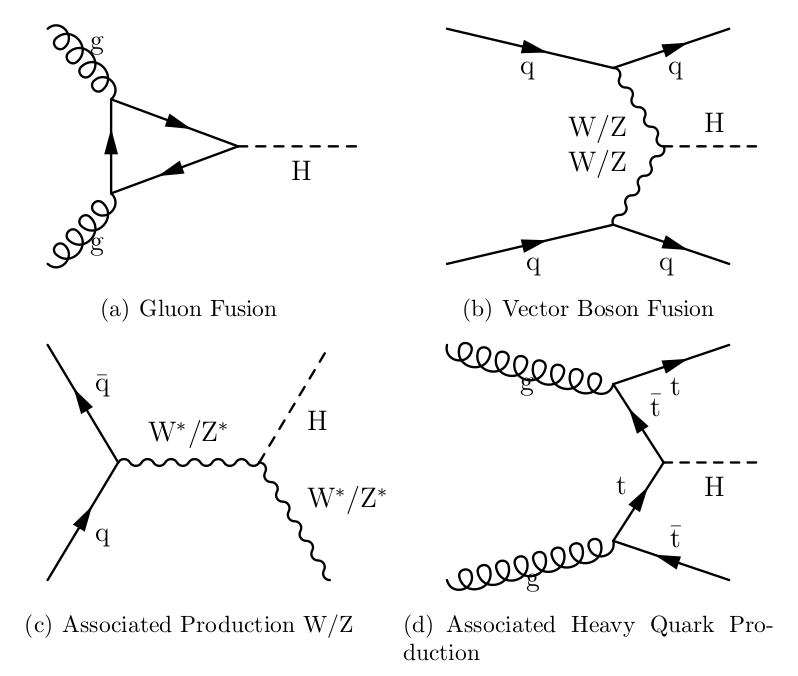

Higgs production at the LHC can be divided into four main mechanisms:

- gluon fusion (GF) (\( gg \rightarrow H \));
- Vector Boson Fusion (VBF) (\( qq \rightarrow qqH \));
- associated production with a W/Z boson (\( q\bar{q} \rightarrow HW/Z \));
- associated production with heavy quarks (\( gg/q\bar{q} \rightarrow Q\bar{Q}H \)).

Feynman diagrams for each of these processes are shown in Figure 6.

Figure 6: Diagrams of SM Higgs Production Modes at the LHC.

GF, mediated by heavy-quark (mainly top-quark) loops, is the dominant production mode owing to the large gluon flux at the LHC. Higher-order QCD corrections enhance it further: next-to-leading order (NLO) effects increase its total cross section by \( \sim \) 80-100 \( \% \) at the LHC [8].

According to the SM, VBF is suppressed by approximately one order of magnitude relative to GF. However, this mode produces a Higgs boson in association with two quarks, leading to two highly energetic jets, typically in the forward regions of the detector. Little jet activity is expected between these jets apart from the Higgs decay products, because there is no colour flow between the initial interacting particles. This distinctive signature allows efficient suppression of backgrounds. The associated production modes have lower cross sections than either GF or VBF. Nonetheless, they will need to be studied to verify the SM prediction of the Higgs production modes. In addition, associated production with heavy quarks is important for measuring the properties of the Higgs boson.

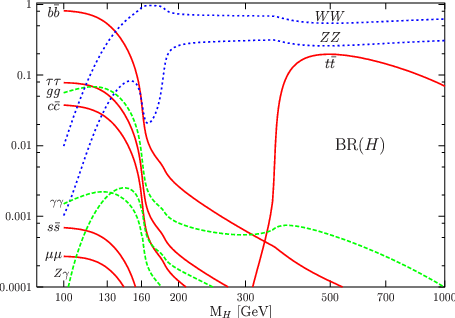

The decays of the SM Higgs boson can be loosely grouped into decays to fermion pairs and decays to gauge boson pairs (including loop-induced decays). Figure 7 shows the branching ratios (BR), or relative rates, of the SM Higgs boson for each decay mode as a function of Higgs mass. At tree level, the coupling of the Higgs boson to fermions is proportional to the fermion mass. As a result, a Higgs boson of a given mass preferentially decays to the heaviest fermions that are kinematically accessible. Its decays can therefore be further classified by Higgs mass.

Figure 7: Branching ratios for the SM Higgs boson as a function of its mass [9].
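The mass dependence described above can be made explicit with the standard tree-level SM expressions, quoted here for illustration only. Higher-order QCD corrections, which are significant for decays to quarks, are neglected:

$$ g_{Hf\bar{f}} = \frac{m_{f}}{v}, \quad v = \left(\sqrt{2}\,G_{F}\right)^{-1/2} \approx 246\ \mathrm{GeV}, \qquad \Gamma(H\rightarrow f\bar{f}) = \frac{N_{c}\,G_{F}\,m_{H}\,m_{f}^{2}}{4\sqrt{2}\,\pi}\left(1-\frac{4m_{f}^{2}}{m_{H}^{2}}\right)^{3/2} $$

Here \( N_{c} = 3 \) for quarks and \( N_{c} = 1 \) for leptons. Because \( \Gamma \propto m_{f}^{2} \), the heaviest kinematically accessible fermion dominates the fermionic decays. For example, \( b\bar{b} \) is favoured over \( \tau^{+}\tau^{-} \) both by the larger mass and by the colour factor.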

The Large Hadron Collider

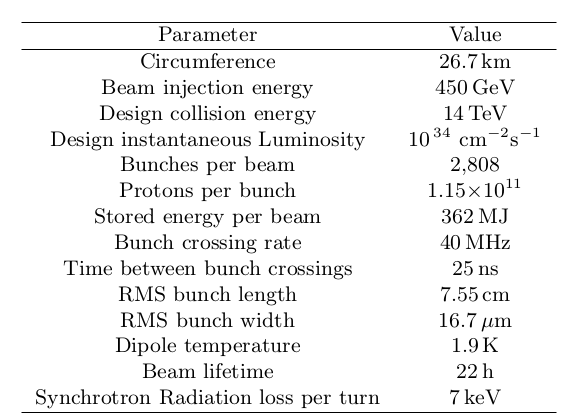

The Large Hadron Collider (LHC) is a proton-proton (pp) collider with a design centre-of-mass energy of \( \sqrt{s} \) = 14 \( \mathrm{TeV} \) and is the successor of the LEP collider. It has a 26.7 km circumference and is installed in the existing LEP tunnel, approximately 100 m below ground level on the Swiss-French border. Its design characteristics are shown in Figure 8.

Figure 8: Main LHC parameters.

As a pp collider, the LHC (like the Tevatron) is not limited in its maximum \( \sqrt{s} \) by synchrotron radiation, as LEP was. At fixed beam energy and bending radius, the power emitted is proportional to \( 1/m^{4} \), where \( m \) is the beam particle mass. However, producing antiprotons is highly inefficient, so the LHC accelerates two counter-rotating proton beams. In \( p\bar{p} \) colliders such as the Tevatron, both beams can share the same beam pipe. The LHC, in contrast, needs a separate beam pipe for each beam, with opposite bending magnetic field orientations. Because the size of the accelerator was constrained by the diameter of the LEP tunnel, a twin-bore dipole magnet design, first proposed by J. Blewett [10], was adopted.
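To put the \( 1/m^{4} \) dependence into numbers, the sketch below compares the synchrotron power radiated by electrons and by protons at the same beam energy and bending radius. The masses are rounded CODATA values in \( \mathrm{MeV}/c^{2} \), and the function name is illustrative.

```python
# Rest masses in MeV/c^2 (rounded CODATA values)
M_PROTON = 938.272
M_ELECTRON = 0.51099895


def synchrotron_power_ratio(m_light, m_heavy):
    """P_light / P_heavy at equal beam energy and bending radius, using P ~ E^4 / (m^4 R^2)."""
    return (m_heavy / m_light) ** 4


ratio = synchrotron_power_ratio(M_ELECTRON, M_PROTON)
print(f"{ratio:.2e}")  # about 1.14e+13
```

An electron beam loses roughly \( 10^{13} \) times more power than a proton beam of the same energy in the same tunnel. This is why LEP was energy-limited while the LHC is limited mainly by its bending magnets.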

Altogether there are six experiments at the LHC. Four of them, ATLAS (A Toroidal LHC ApparatuS) [11], CMS (Compact Muon Solenoid), LHCb (LHC beauty) and ALICE (A Large Ion Collider Experiment), are located in dedicated underground caverns, one at each of the four interaction points of the LHC. The other two, LHCf and TOTEM, are situated approximately 100 m from the interaction points of ATLAS and CMS respectively. ATLAS and CMS are the LHC's two multi-purpose experiments and the central tools for fulfilling the LHC's main physics objectives. These include making precision measurements of the SM, finding evidence for a Higgs-like boson and exploring physics beyond the SM. The other detectors also have important roles. LHCb will study B physics and CP violation in the quark sector using b-hadrons. ALICE will search for evidence of quark-gluon plasma during LHC lead-ion collision runs. TOTEM will measure the pp cross section at the LHC, while LHCf will study physics at small angles to the beam direction.

The rate of proton-proton interactions (the event rate, \( R \)) produced by the collision of the counter-rotating proton beams is proportional to the instantaneous luminosity \( \mathcal{L} \) through \( R = \mathcal{L}\sigma \). Here \( \sigma \) is the cross section of the process, a measure of the probability of that interaction occurring (typically expressed in barns, \( 1\ \mathrm{b} = 10^{-24}\ \mathrm{cm}^{2} \)). The LHC has a design luminosity of \( 10^{34}\ \mathrm{cm}^{-2}\mathrm{s}^{-1} \). Two luminosity phases are scheduled at the LHC. A low-luminosity phase at \( \approx 10^{33}\ \mathrm{cm}^{-2}\mathrm{s}^{-1} \) will be followed by a high-luminosity phase at the design luminosity of \( 10^{34}\ \mathrm{cm}^{-2}\mathrm{s}^{-1} \). Assuming the machine is operational for 200 days per year, the low- and high-luminosity phases will deliver approximately 10 and 100 \( \mathrm{fb}^{-1} \) of data per year to the LHC experiments, respectively.

Increasing the luminosity by raising the bunch-crossing frequency would place high demands on the sub-detector readout electronics and on the trigger systems used to identify interesting processes. The high LHC luminosity will therefore be achieved by increasing the number of protons per bunch, which leads to multiple interactions per bunch crossing (called pile-up). At design luminosity, a nominal LHC beam will consist of approximately 2800 bunches spaced 25 ns apart, each containing around \( 10^{11} \) protons. This gives a bunch-crossing rate of 40 MHz at each interaction point and, on average, approximately 23 inelastic pp collisions per bunch crossing (pile-up events).
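The mean pile-up follows from dividing the inelastic interaction rate \( \mathcal{L}\sigma_{\mathrm{inel}} \) by the rate of filled-bunch crossings. The number of interactions in a given crossing is then Poisson distributed about that mean. In the sketch below, the inelastic cross section of about 70 mb is an assumption, not a number from this report, and the result scales linearly with it. The 11.245 kHz LHC revolution frequency times 2800 filled bunches gives about 31.5 MHz, somewhat below the nominal 40 MHz because of gaps in the bunch train.

```python
import math

LHC_REVOLUTION_FREQUENCY_HZ = 11245.0  # LHC orbit frequency


def mean_pileup(lumi_cm2_s, sigma_inel_cm2, n_bunches, f_rev_hz=LHC_REVOLUTION_FREQUENCY_HZ):
    """Mean interactions per crossing: inelastic rate L*sigma divided by n_bunches*f_rev."""
    return lumi_cm2_s * sigma_inel_cm2 / (n_bunches * f_rev_hz)


def poisson_prob(k, mu):
    """Probability of exactly k interactions in a crossing for a Poisson mean mu > 0."""
    # Work in logs so that large k does not overflow.
    return math.exp(k * math.log(mu) - mu - math.lgamma(k + 1))


# Assumed inputs: design luminosity, ~2800 filled bunches (from the text),
# and an assumed inelastic cross section of 70 mb = 7e-26 cm^2.
mu = mean_pileup(1e34, 7e-26, 2800)
print(f"mean pile-up: {mu:.1f}")
print(f"P(exactly 20 interactions): {poisson_prob(20, mu):.3f}")
```

With these inputs, the mean comes out at roughly 22 interactions per crossing, of the same order as the figure quoted above.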

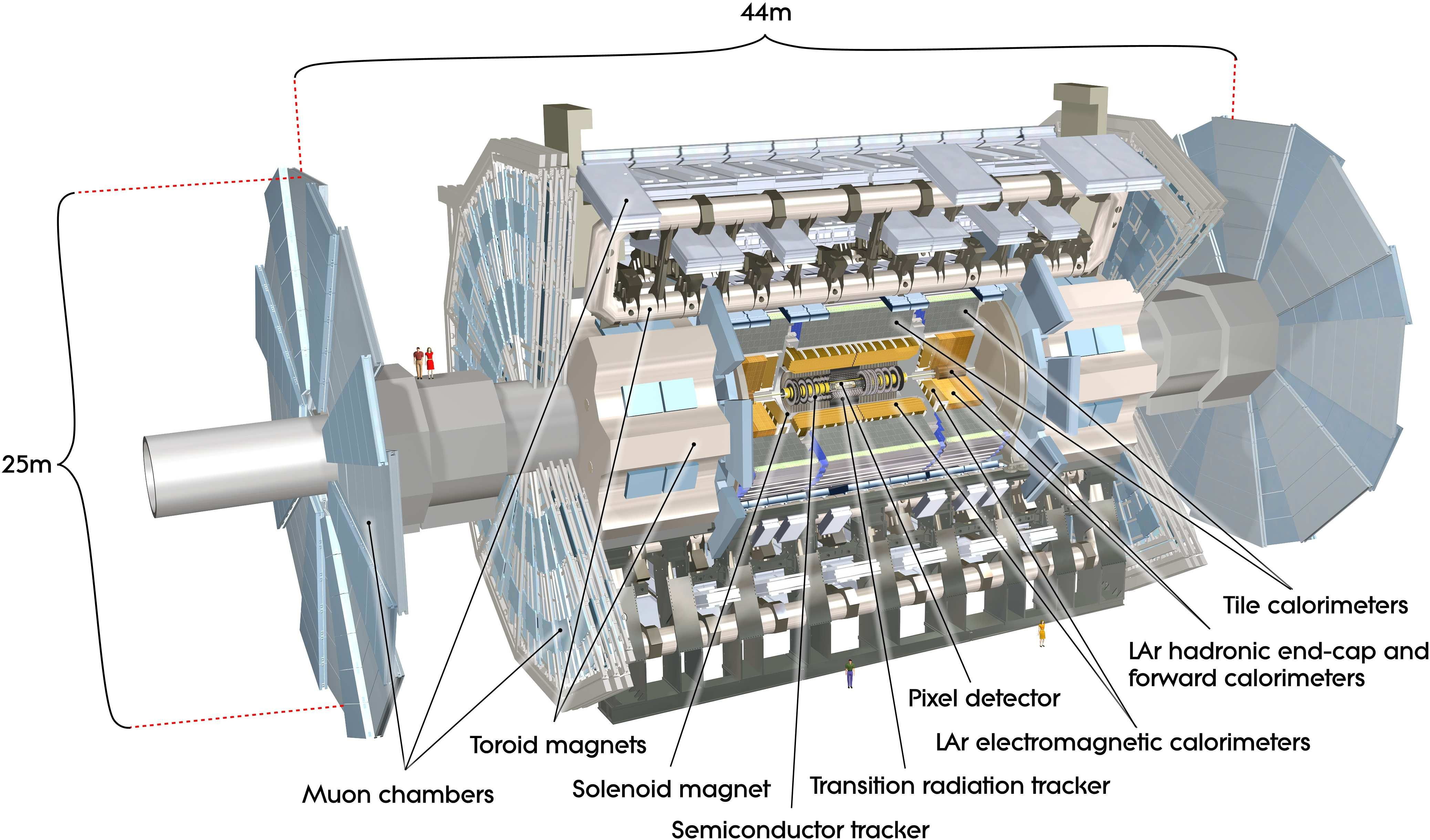

The ATLAS Detector

ATLAS [12] [13] is one of the two general purpose detectors at the LHC. It is designed to detect the remnants of the high energy collisions produced by the LHC in order to test our current theoretical understanding of particle interactions. In order to do this, the remnants of the collisions must first be reconstructed into meaningful particle representations, from which the different physics processes of interest can be identified. Particle reconstruction in this context is explored in more detail in the section Physics Objects.

Arguably the primary goal of ATLAS (and CMS) is to establish the cause of spontaneous symmetry breaking in the electroweak sector, and its design has been guided and optimized accordingly to search for a Higgs-type boson. However, the unprecedented energy and luminosity of the LHC mean that ATLAS's physics programme also includes:

- Precise SM measurements. Owing to their large cross sections, W and Z bosons are produced copiously at the LHC. Precision measurements of their properties have helped in the commissioning of ATLAS and also provide a consistency check of the SM. The top quark was discovered at the Tevatron in 1995, where many of its properties have since been studied. These will be further tested at the LHC, where the top-quark production cross section is more than two orders of magnitude higher than at the Tevatron. This programme is further motivated because top backgrounds will be dominant for many searches and measurements at the LHC.

- Beyond the SM: several theories predict new physics at the \( \mathrm{TeV} \) scale, supersymmetry being a popular candidate. The \( W' \) and \( Z' \) bosons are further examples of new particles predicted in this energy regime; they typically decay to high-\( p_{T} \) leptons.

Figure 9: Schematic layout of the ATLAS detector showing the major sub-detectors [11].

Nomenclature

The origin of the ATLAS coordinate system is the nominal interaction point of the LHC beams inside the ATLAS detector. The positive \( z \) axis is defined by the trajectory of the clockwise (viewed from above) rotating proton beam. The \( xy \) (\( r \)) plane is transverse to the beam, with the \( x \) axis pointing towards the centre of the LHC ring and the positive \( y \) axis pointing upwards. Transverse variables are defined in this plane. Examples are the transverse momentum \( p_{T} = \sqrt{p_{x}^{2} + p_{y}^{2}} = p\sin\theta \) and the transverse energy \( E_{T} = \sqrt{E^{2} - p_{z}^{2}} \), where \( p_{x} \), \( p_{y} \), \( p_{z} \) are the \( x \), \( y \) and \( z \) components of the particle's momentum and \( E \) is its energy. The polar angle \( \theta \) is measured from the positive \( z \) axis. It is often expressed in terms of the pseudorapidity \( \eta = -\ln\tan(\theta/2) \), which equals the rapidity \( y = \frac{1}{2}\ln\frac{E+p_{z}}{E-p_{z}} \) in the limit of negligible mass. Differences in rapidity are invariant under Lorentz boosts along the \( z \) direction. The azimuthal angle \( \phi = \tan^{-1}(p_{y}/p_{x}) \) is measured from the positive \( x \) axis, clockwise around the \( z \) axis when facing the positive \( z \) direction. Distances in the \( \eta \)-\( \phi \) plane are typically expressed as \( \Delta R = \sqrt{\Delta\eta^{2} + \Delta\phi^{2}} \).
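The definitions above translate directly into code. The sketch below uses hypothetical function names and is not ATLAS software. It computes \( p_{T} \), \( \eta \), \( y \), \( \phi \) and \( \Delta R \), and wraps \( \Delta\phi \) into \( [-\pi, \pi) \) so that two particles on either side of \( \phi = \pm\pi \) are correctly treated as close.

```python
import math


def transverse_momentum(px, py):
    """p_T = sqrt(px^2 + py^2)."""
    return math.hypot(px, py)


def pseudorapidity(px, py, pz):
    """eta = -ln(tan(theta/2)); undefined for particles exactly along the beam axis."""
    theta = math.atan2(transverse_momentum(px, py), pz)  # polar angle from +z, in [0, pi]
    return -math.log(math.tan(theta / 2.0))


def rapidity(energy, pz):
    """y = 0.5 * ln((E + pz) / (E - pz))."""
    return 0.5 * math.log((energy + pz) / (energy - pz))


def azimuth(px, py):
    """phi measured from the +x axis, returned in (-pi, pi]."""
    return math.atan2(py, px)


def delta_r(eta1, phi1, eta2, phi2):
    """Distance in the eta-phi plane, with delta-phi wrapped into [-pi, pi)."""
    dphi = (phi1 - phi2 + math.pi) % (2.0 * math.pi) - math.pi
    return math.hypot(eta1 - eta2, dphi)


# A massless particle with p = (3, 4, 12), |p| = E = 13: eta and y coincide (both ln 5).
print(pseudorapidity(3.0, 4.0, 12.0), rapidity(13.0, 12.0))
```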

The Inner Detector

The purpose of the inner detector [14] [15] is to reconstruct accurately the charged-particle tracks in and around the ATLAS interaction point, where of the order of 1000 tracks are expected every 25 ns. More specifically, it must provide robust pattern recognition, measure track momenta and make primary and secondary vertex measurements. The secondary vertices enable identification of jets from b-hadron decays (b-tagged jets).

Calorimetry

ATLAS calorimetry is designed to measure the energy of electrons, photons and hadrons. It is divided into three main parts: the electromagnetic calorimeter (ECAL), the hadronic calorimeter (HCAL) and the forward calorimeters (FCAL). These use different detector technologies to achieve good energy and position resolution. Together they provide the large, hermetic coverage required to measure missing transverse energy (\( E_{T}^{\mathrm{miss}} \)), which arises when weakly interacting particles such as neutrinos (\( \nu \)) escape the detector undetected. In addition, they minimise hadron punch-through into the muon system. Each of the ATLAS calorimeters is a sampling calorimeter, i.e. it samples, rather than fully absorbs and measures, the energy deposited by a traversing particle. To achieve this, each is composed of alternating layers of a dense absorber, in which traversing particles shower, and a sampling medium, which measures the energy of the resulting showers.
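Because momentum is balanced in the transverse plane, the missing transverse momentum can be estimated as minus the vector sum of the transverse momenta of all visible objects. The sketch below is a deliberately simplified illustration of that idea, not the ATLAS reconstruction algorithm, which builds \( E_{T}^{\mathrm{miss}} \) from calibrated calorimeter deposits and muons. The function name and the input format, a list of \( (p_{x}, p_{y}) \) pairs in GeV, are assumptions.

```python
import math


def missing_et(visible_objects):
    """Magnitude and azimuth of -sum(pT) over visible objects given as (px, py) pairs."""
    mex = -sum(px for px, _ in visible_objects)
    mey = -sum(py for _, py in visible_objects)
    return math.hypot(mex, mey), math.atan2(mey, mex)


# Two hypothetical leptons (GeV); the recoiling invisible system balances them.
met, met_phi = missing_et([(30.0, 0.0), (0.0, 40.0)])
print(f"E_T^miss = {met:.1f} GeV at phi = {met_phi:.2f}")
```

In an \( H\rightarrow ZZ\rightarrow \ell\ell\nu\nu \) event, the invisible \( Z\rightarrow\nu\nu \) recoils against the visible system, so a heavier, more boosted Higgs boson tends to produce larger \( E_{T}^{\mathrm{miss}} \).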

Muon Spectrometer

The purpose of the ATLAS muon spectrometer [16] is to provide triggering and bunch-crossing identification for events with high-\( p_{T} \) muons, as well as high-precision standalone measurements of muon momentum and position.

Trigger and Data Acquisition

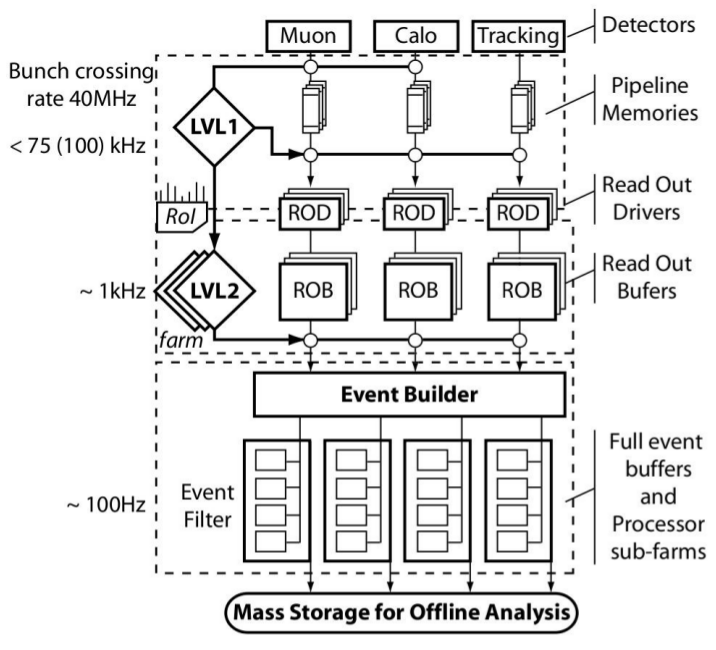

The design luminosity of the LHC (\( \mathcal{L} = 10^{34}\ \mathrm{cm}^{-2}\mathrm{s}^{-1} \)) will give rise to 40 million bunch crossings per second. After accounting for multiple interactions per bunch crossing, the total event rate is expected to be of the order of 1 GHz, with a typical event size of the order of 1.5 MB. At the time of writing, technological limits restricted the rate of recording events to disk to \( \approx \) 300 MB/s, limiting the maximum event storage rate to approximately 200 Hz. The ATLAS Trigger and Data Acquisition (TDAQ) system is designed to reduce the event rate from the raw value of 1 GHz to 200 Hz. In doing so, it must retain as many 'interesting' physics events as possible (i.e. those relating to the goals of ATLAS outlined in the section The ATLAS Detector). The trigger system is based on three levels: Level 1, Level 2 and the Event Filter, as illustrated in Figure 10. Level 2 and the Event Filter together constitute the High Level Trigger (HLT) [17].

Figure 10: Overview of the ATLAS trigger system [18].
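The rate budget above is simple arithmetic. The sketch below uses only the round numbers quoted in the text to reproduce the 200 Hz storage limit and the overall rejection factor that the three trigger levels must provide together.

```python
# Round numbers quoted in the text
bunch_crossing_rate_hz = 40e6   # 40 million bunch crossings per second
interaction_rate_hz = 1e9       # ~1 GHz including pile-up
event_size_mb = 1.5             # typical event size
disk_bandwidth_mb_s = 300.0     # recording limit

max_storage_rate_hz = disk_bandwidth_mb_s / event_size_mb   # events that can be written per second
rejection_factor = interaction_rate_hz / max_storage_rate_hz
mean_interactions = interaction_rate_hz / bunch_crossing_rate_hz

print(f"storage rate: {max_storage_rate_hz:.0f} Hz")
print(f"required rejection: {rejection_factor:.0e}")
print(f"interactions per crossing implied: {mean_interactions:.0f}")
```

The trigger therefore has to reject all but about one event in \( 5\times10^{6} \). The ratio of the two quoted rates also implies roughly 25 interactions per crossing, consistent in order of magnitude with the pile-up figure given earlier.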

Signal and Background Processes

Signal Processes

The signal channel investigated in this study is a SM Higgs boson decaying to two Z bosons. As shown in the section The Higgs Mechanism, this is one of the dominant decay modes over a large range of high Higgs masses. The Z decays considered are those in which one Z decays to leptons and the other to neutrinos. Where one of the Z bosons decays to electrons (muons), this is subsequently referred to as the electron (muon) channel, or the \( Z\rightarrow ee \) (\( Z\rightarrow\mu\mu \)) channel. No separate channel is considered for the \( \tau \) lepton, but since \( \tau \) decays typically involve electrons or muons, \( \tau \) leptons are included in this sense. Figure 2 gives a breakdown of the main Z decay modes and their branching ratios. For triggering purposes, one Z is required to decay to leptons, so the main final states in this Higgs channel are leptons, leptons+jets and leptons+neutrinos. The four-lepton decay provides the signal that is most easily identified in the detector, owing to the presence of four high-\( p_{T} \) leptons. However, it has the lowest BR, \( \approx \) 1 \( \% \). In contrast, the leptons+jets decay has a much larger BR, \( \approx \) 14 \( \% \). However, its two jets make it harder to identify in the busy hadronic environment of ATLAS during data taking. The leptons+neutrinos final state is perhaps a compromise between these, with a BR of \( \approx \) 4 \( \% \), in between those of the purely leptonic and the leptons+jets final states.

1: Here, and subsequently, lepton refers to either an electron or a muon.
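The quoted fractions for the two mixed final states can be reproduced from the Z branching ratios. The sketch below uses approximate PDG values, which are an assumption of this illustration rather than numbers taken from this report, and counts \( \ell = e, \mu, \tau \). That convention reproduces the \( \approx \) 14 \( \% \) and \( \approx \) 4 \( \% \) figures.

```python
# Approximate Z boson branching ratios (PDG values, rounded)
BR_Z_LL_PER_FLAVOUR = 0.0337   # Z -> e+e-, mu+mu- or tau+tau- (each flavour)
BR_Z_INVISIBLE = 0.200         # Z -> neutrino pairs (all three flavours)
BR_Z_HADRONS = 0.699           # Z -> quark-antiquark pairs


def zz_fraction(br_a, br_b):
    """Fraction of ZZ pairs with one Z decaying to final state a and the other to b (a != b)."""
    return 2.0 * br_a * br_b  # factor 2: either of the two Z bosons can be the one decaying to a


br_ll = 3 * BR_Z_LL_PER_FLAVOUR  # charged leptons l = e, mu, tau
print(f"ZZ -> ll nu nu: {zz_fraction(br_ll, BR_Z_INVISIBLE):.1%}")
print(f"ZZ -> ll qq   : {zz_fraction(br_ll, BR_Z_HADRONS):.1%}")
```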

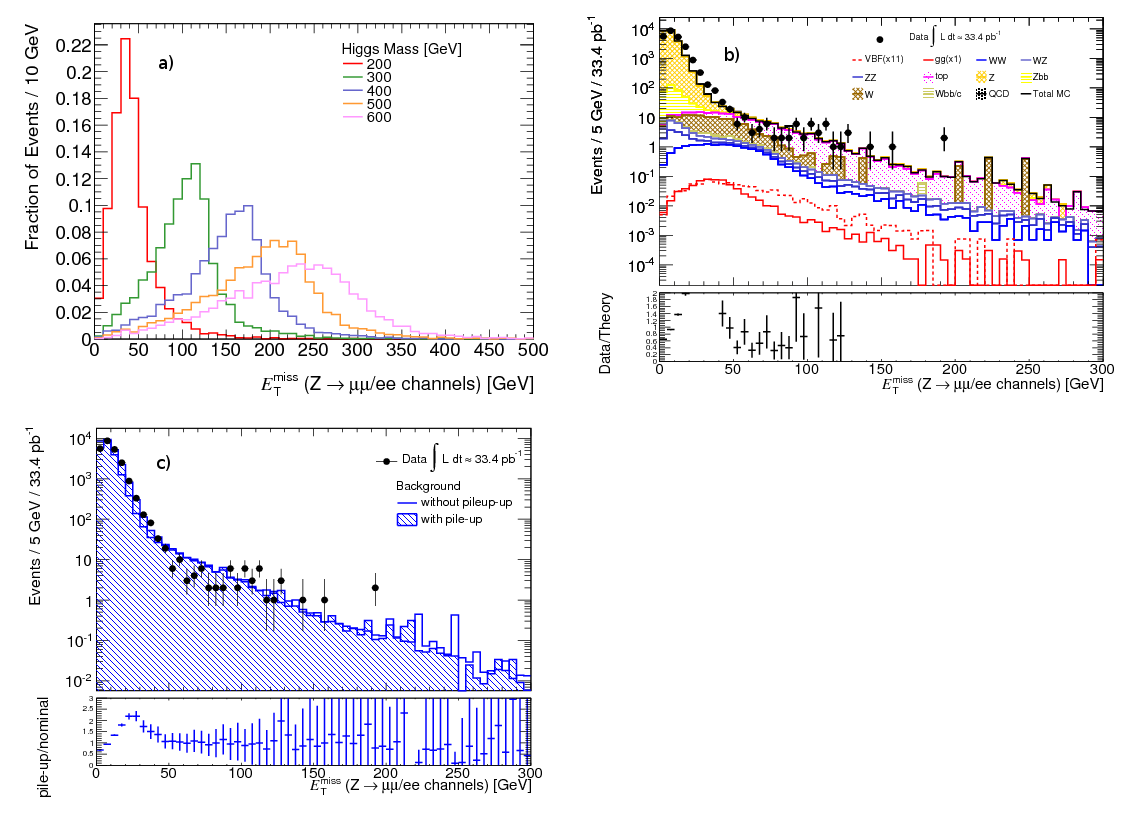

The leptons+neutrinos final state has missing energy from the two neutrinos, which provides a good handle for discriminating against background. Studies of this channel in the GF production mode [19] [20] demonstrated this. They showed that the large missing energy in the signal gives good sensitivity, particularly at high mass, where the Higgs decay products are more strongly boosted. At lower Higgs mass, however, the signal has much lower missing energy, making discrimination against background more difficult.

This study takes a first dedicated look at the VBF production mode, in particular to see whether the characteristics of the VBF topology in this decay channel can improve the sensitivity. Focusing on VBF production is also motivated by its role in the SM. It is predicted to provide a different mechanism for producing a Higgs-type boson from the GF mode, which dominates the cross section, and this prediction must be tested. Further, VBF production provides access to different couplings compared to the GF mode [21]. These couplings will need to be studied to cross-check our understanding of the mechanism through which the weak gauge boson and fermion masses are generated.

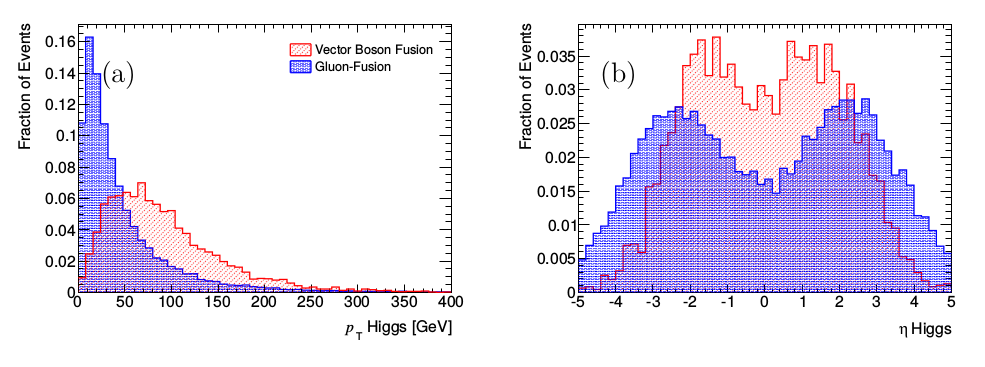

Figure 11: Comparison of the Higgs boson (a) \( p_{T} \) and (b) \( \eta \) in vector boson fusion and gluon fusion produced truth signal events.

The \( p_{T} \) of a Higgs boson produced in a VBF event is typically harder than in a GF event, as can be seen in Figure 11. The figure uses Monte Carlo truth-level information (i.e. neglecting detector effects and giving access to the properties of the underlying process) in \( H\rightarrow ZZ\rightarrow \ell\ell\nu\nu \) events. In addition, in VBF events the Higgs boson is more often produced in the central region of the detector. These properties arise because the Higgs boson recoils against the typically forward tag-jets, the remnants of the VBF process. The tag-jets correspond to the outgoing quarks produced in association with the Higgs boson, as shown in Figure 6. The experimental signature of the signal in the VBF production mode is therefore two hard, isolated leptons, missing energy and the tag-jets from the VBF process.
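Two quantities are commonly used to characterise a pair of forward tag-jets: their separation in pseudorapidity, \( \Delta\eta_{jj} \), and their invariant mass, \( m_{jj} \). The sketch below shows how these could be computed from jets given as \( (p_{T}, \eta, \phi[, m]) \). The function names and input format are hypothetical. No cut values are implied, since the actual selections are described in the section Feature Selection.

```python
import math


def four_vector(pt, eta, phi, m=0.0):
    """(E, px, py, pz) from collider coordinates; |p| = pt * cosh(eta)."""
    px = pt * math.cos(phi)
    py = pt * math.sin(phi)
    pz = pt * math.sinh(eta)
    energy = math.sqrt(px**2 + py**2 + pz**2 + m**2)
    return energy, px, py, pz


def dijet_mass(jet1, jet2):
    """Invariant mass of the two-jet system."""
    e, px, py, pz = (a + b for a, b in zip(four_vector(*jet1), four_vector(*jet2)))
    return math.sqrt(max(e**2 - px**2 - py**2 - pz**2, 0.0))


def delta_eta(jet1, jet2):
    """Pseudorapidity separation of the two jets."""
    return abs(jet1[1] - jet2[1])


# Hypothetical tag-jet pair: 50 GeV jets in opposite forward regions.
j1, j2 = (50.0, 2.0, 0.0), (50.0, -2.0, math.pi)
print(f"m_jj = {dijet_mass(j1, j2):.1f} GeV, delta_eta = {delta_eta(j1, j2):.1f}")
```

For two massless jets, \( m_{jj}^{2} = 2p_{T1}p_{T2}(\cosh\Delta\eta - \cos\Delta\phi) \). Widely separated forward jets, as in VBF, therefore tend to have large invariant mass even at modest \( p_{T} \).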

Background Processes

The signal is expected to suffer from various SM background processes, which can be categorised as follows:

- top production via SM interactions

- Single and di-boson production (with heavy quarks or jets)

- QCD dijet and heavy quark production

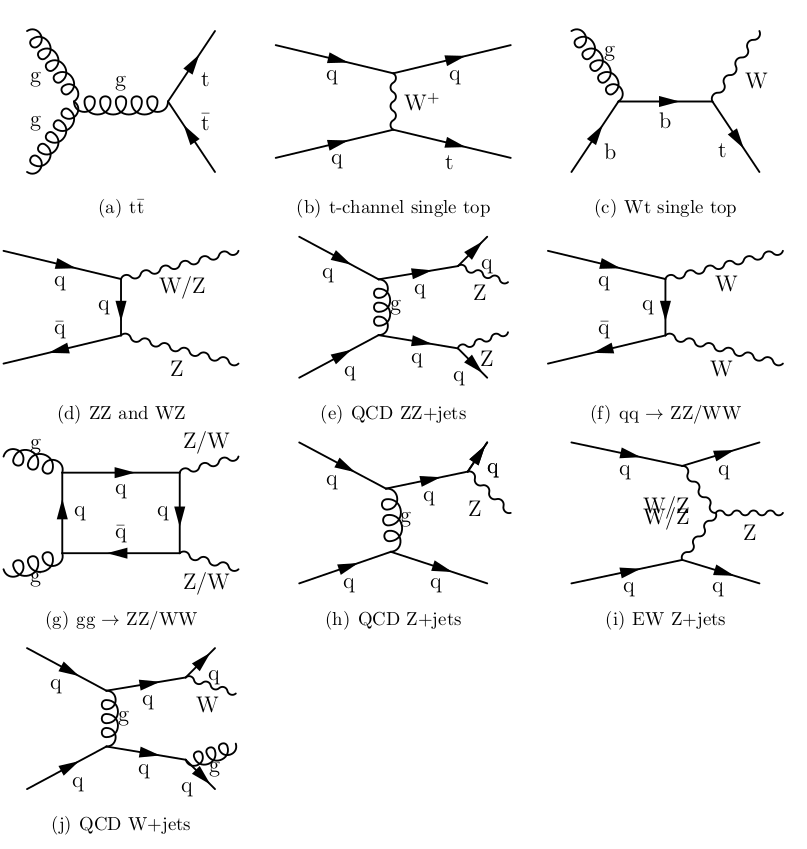

Figure 12: Feynman Diagrams of main backgrounds

Background example: Top Pair Production

Two \( \toptop \) decay modes (Figure 12 a)) are expected to contribute to the background in this analysis. In the lepton-lepton (ll) channel both W bosons decay leptonically. In the lepton-hadron (lh) channel one W decays leptonically and the other hadronically. These channels make up \( \approx \) 6 \( \% \) and 34 \( \% \) of all \( \toptop \) decays respectively. The lepton-lepton channel final state contains two leptons, missing energy and two b-quark jets. It becomes a background when neither b-quark jet is identified, either because the jets lie outside the acceptance of the tracker or because they fail the b-tagging criteria. The lepton-hadron channel becomes a background when one of the jets is misidentified as a lepton and the b-quark jets are not b-tagged. Because the top quarks recoil against each other when they are produced, the tag-jet characteristics of the signal may not provide much suppression of this background.
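The quoted fractions follow from combining W branching ratios. The sketch below roughly reproduces them under several assumptions. The branching ratios are approximate PDG-like values that this report does not give. A decay W → τν followed by a leptonic τ decay is counted as a lepton. The hadronic side includes only W → qq'. This is one plausible counting convention; the exact convention behind the quoted numbers is not stated.

```python
# Assumed (approximate, PDG-like) branching ratios; not taken from this analysis.
BR_W_TO_LNU = 0.108       # W -> l nu, per lepton flavour (e, mu or tau)
BR_W_TO_HADRONS = 0.676   # W -> q q'
BR_TAU_TO_LIGHT = 0.352   # tau -> (e or mu) nu nu, summed over e and mu


def ttbar_channel_fractions():
    """Return the (ll, lh) fractions of ttbar decays, assuming t -> W b always."""
    # Probability that a W yields an electron or muon in the final state:
    # direct W -> e nu, mu nu plus W -> tau nu with a leptonic tau decay.
    p_lep = 2 * BR_W_TO_LNU + BR_W_TO_LNU * BR_TAU_TO_LIGHT
    p_had = BR_W_TO_HADRONS
    ll = p_lep ** 2            # both W bosons give a light lepton
    lh = 2 * p_lep * p_had     # either W can be the leptonic one
    return ll, lh


if __name__ == "__main__":
    ll, lh = ttbar_channel_fractions()
    print(f"ll = {ll:.3f}, lh = {lh:.3f}")  # prints ll = 0.065, lh = 0.343
```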

Phenomenology at hadron colliders and Monte Carlo simulation

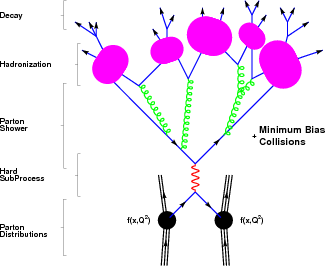

The description of the Higgs signal and its background processes earlier in this section ignores the fact that the LHC collides composite protons (\( A,B \)). To model the interaction of composite protons at LHC energies, it is useful to consider the parton model, in which the proton is made up of constituent partons (\( a,b \)). In this model the interaction of individual partons produces other particles such as the Higgs boson (\( c \)), together with other remnants (\( Z \)). The partonic production cross section, \( d\sigma_{a+b\rightarrow c} \), can be calculated within the SM. The hadronic cross section (\( d\sigma_{A+B\rightarrow c+Z} \)), however, must be calculated within the parton model according to $$ \begin{equation} d\sigma_{A+B\rightarrow c+Z} = \sum_{a,b} \int_{0}^{1} dx_{a} \int_{0}^{1} dx_{b} f^{a}_{A}(x_{a},Q^{2})f^{b}_{B}(x_{b},Q^{2})d\sigma_{a+b\rightarrow c} \label{BasicLHCProcess} \end{equation} $$ where the sum runs over all parton species \( a,b \) contributing to the production of \( c \) (i.e. all partonic sub-processes, each described by its Feynman diagrams). \( f^{a}_{A} \) and \( f^{b}_{B} \) are the parton distribution functions (PDFs). They give the probability of finding a parton \( a \) with momentum fraction \( x_{a} \) within its parent proton \( A \) at energy scale \( Q \). PDFs cannot be calculated from first principles and must be measured, for example in deep inelastic scattering experiments. The hadronic cross section is therefore composed of two parts: a perturbative, short-distance part and a non-perturbative, long-distance part. Separating the interaction in this way is called factorization, and the energy scale at which the separation is made is called the factorization scale.
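The factorised formula can be evaluated numerically as a double integral over the momentum fractions for each parton channel, with the channels then summed. The sketch below uses a midpoint rule on a fixed grid. The PDFs and partonic cross sections passed in are toy functions that stand in for fitted PDF sets and SM matrix elements; they are assumptions for illustration. The scale \( Q \) is held fixed implicitly. The test compares against the analytic area \( 1 - \tau + \tau\ln\tau \) for flat PDFs and a step-function threshold.

```python
from typing import Callable, Iterable, Tuple

# Hypothetical type aliases for this sketch.
PDF = Callable[[float], float]          # x -> f(x, Q^2) at a fixed, implicit scale Q
PartonicXS = Callable[[float], float]   # s_hat -> sigma_hat(a + b -> c)
Channel = Tuple[PDF, PDF, PartonicXS]   # one (a, b) term of the sum


def hadronic_cross_section(channels: Iterable[Channel], s: float, n: int = 400) -> float:
    """Midpoint-rule estimate of the factorised hadronic cross section.

    For each parton channel (a, b), integrate f_a(x_a) f_b(x_b) sigma_hat(x_a x_b s)
    over x_a, x_b in (0, 1) on an n x n grid, then sum over channels.
    """
    h = 1.0 / n
    mids = [(i + 0.5) * h for i in range(n)]  # bin centres avoid the x = 0 endpoint
    total = 0.0
    for f_a, f_b, sigma_hat in channels:
        for x_a in mids:
            fa = f_a(x_a)
            for x_b in mids:
                # partonic centre-of-mass energy squared: s_hat = x_a * x_b * s
                total += fa * f_b(x_b) * sigma_hat(x_a * x_b * s)
    return total * h * h


if __name__ == "__main__":
    flat = lambda x: 1.0                                  # toy PDF, illustration only
    tau = 0.25
    step = lambda s_hat: 1.0 if s_hat > tau * 1.0 else 0.0  # toy threshold cross section
    print(hadronic_cross_section([(flat, flat, step)], s=1.0))  # close to 1 - tau + tau*ln(tau)
```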
A diagram showing the different processes occurring when high energy protons collide is shown in Figure 13.

Figure 13: Phenomenological model of the interaction of a proton-proton collision at high energy scale. [22]

The partonic interaction that produces, for example, the Higgs boson enters \( d\sigma_{A+B\rightarrow c+Z} \) through \( d\sigma_{a+b\rightarrow c} \). It is calculated within the parton model and corresponds to the hard sub-process. All other contributions to the final state that do not originate from the hard sub-process are called the underlying event. These include initial state radiation (ISR) emitted by the incoming partons, interactions between the proton remnants (i.e. partons other than those in the hard interaction), and any final state radiation (FSR) from the final state particles. Because the final state partons carry colour charge, they often radiate gluons, which can in turn split into quark anti-quark pairs. This gives rise to cascades of partons called parton showers. Once the shower has evolved to a sufficiently low energy scale, the partons form colour-neutral hadrons in the process of hadronization. These hadrons, or their decay products, are then measured in the detector. Parton showering and hadronization are described by dedicated phenomenological models.

The most common technique for modelling physical processes is the Monte Carlo method. Monte Carlo methods use pseudo-random numbers to model particle interactions, based on underlying physical principles that are beyond the scope of this document. In particle physics this procedure is generally called event generation, and the program that uses the Monte Carlo methods is called a Monte Carlo generator. Given an input process and a set of initial conditions, it calculates the kinematics of the final state particles. The final state particles are those considered stable, in the sense that the distance they travel within their proper lifetime is sufficiently large. Some Monte Carlo generators, called matrix element generators, simulate specific final states, typically by summing over all relevant Feynman diagrams; an example is MC@NLO [23]. Other generators also simulate non-perturbative effects including hadronisation; an example is PYTHIA [24]. Details of the particles produced in the event generation are provided in the Monte Carlo \( truth \) information.
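Real generators are far more elaborate. Still, the basic way pseudo-random numbers turn a physics distribution into simulated events can be sketched with accept-reject (hit-or-miss) sampling. The toy distribution \( 1 + \cos^{2}\theta \) is an assumption chosen because its moments are easy to check (\( \langle\cos^{2}\theta\rangle = 0.4 \)). It is not taken from this analysis, and the sketch does not show how PYTHIA or MC@NLO are implemented internally.

```python
import random
from typing import Callable, List


def accept_reject(pdf: Callable[[float], float], lo: float, hi: float,
                  pdf_max: float, n: int, seed: int = 12345) -> List[float]:
    """Draw n values distributed according to pdf on [lo, hi] by accept-reject.

    pdf_max must bound pdf from above on [lo, hi]; pdf need not be normalised.
    """
    rng = random.Random(seed)  # seeded pseudo-random generator: reproducible sample
    samples: List[float] = []
    while len(samples) < n:
        x = rng.uniform(lo, hi)                 # propose uniformly over the range
        if rng.uniform(0.0, pdf_max) < pdf(x):  # accept with probability pdf(x) / pdf_max
            samples.append(x)
    return samples


if __name__ == "__main__":
    # Toy angular distribution dN/dcos(theta) proportional to 1 + cos^2(theta).
    cos_theta = accept_reject(lambda c: 1.0 + c * c, -1.0, 1.0, 2.0, 100_000)
    mean_c2 = sum(c * c for c in cos_theta) / len(cos_theta)
    print(f"<cos^2 theta> = {mean_c2:.3f}")  # should be close to 0.4
```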

All Monte Carlo officially produced for the ATLAS Collaboration is produced within the ATHENA framework, a software framework that provides interfaces to all the generators used. To obtain a realistic picture of what would be seen in the detector when a particular process occurs, the interactions of the final state particles with the detector material are modelled. This is done with the GEANT program, which is also run within the ATHENA framework. Two approaches exist, called fast and full simulation, which differ in the level of detail of the detector simulation.

Monte Carlo Samples

The Monte Carlo event samples used in this study were officially produced within the ATLAS Collaboration using version 15.6.3 of the ATHENA framework. They were fully simulated using GEANT 4 and correspond to p-p collisions at \( \sqrt{s} \) = 7 \( \TeV \). The default samples used in this analysis do not model pile-up, and they are used to obtain the main results. This choice was made for two reasons: some of the pile-up background samples had insufficient statistics, and not all the processes considered were simulated with pile-up. Where possible, however, the effect of pile-up was investigated. Where comparisons to pile-up Monte Carlo are made, this is stated explicitly and the pile-up Monte Carlo is referred to as such. The term Monte Carlo used without mention of pile-up refers to the non pile-up Monte Carlo. The pile-up samples considered were simulated with two interactions per bunch crossing. The main properties of the simulated samples used are discussed below, for the signal and, as an example, one of the backgrounds.

Signal

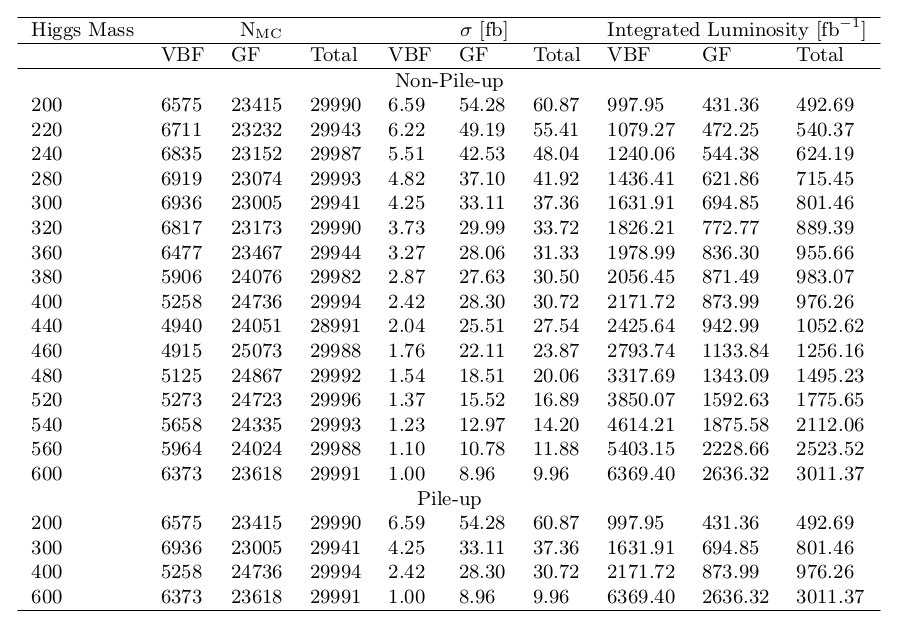

The signal samples used were generated with PYTHIA 6.421 [24]. Z decays to leptons include electrons, muons and taus according to their branching fractions. QED final state radiation was modelled with PHOTOS [25], and tau lepton decays with TAUOLA [26]. The events include both VBF and GF contributions. The cross sections used are from [8], with GF quoted at NNLO and VBF at NLO. The analysis uses signal samples simulated for Higgs masses between \( \mH \) = 200-600 \( \Gcs \) in 20 \( \Gcs \) steps. Figure 14 shows the main properties of the signal samples used, including the number of simulated events, the cross section and the corresponding integrated luminosity, together with a break-down into VBF and GF components. The samples were separated into VBF and GF components using truth information. The Higgs boson was identified, and the event record was then navigated backwards to determine whether the Higgs was produced from gluons (GF) or quarks (VBF). The identity of each particle was found using the PDG particle codes [4]. To verify that the separation was done correctly, the fraction of VBF events obtained was compared to that in a statistically independent sample of \( \htollnunu \) produced with the same configuration options as the official ATLAS Monte Carlo. Agreement was found to within 1 \( \% \) for the range of mass samples tested (\( \mH \) = 200-600 \( \Gcs \) in 100 \( \Gcs \) steps), indicating that the separation of VBF and GF was performed correctly.

Figure 14: Summary of signal Monte Carlo sample properties as a function of Higgs mass used in this analysis, including number of simulated events (N \( _{\mathrm{MC}} \) ), cross section ( \( \sigma \) [fb]) and corresponding integrated luminosity [ \( \fb \) ]. Included are the relative contributions from VBF and GF production mechanisms. The samples were generated with PYTHIA. Pile-up samples were simulated with two interactions per bunch crossing.
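The backward navigation used to separate VBF from GF events can be sketched as follows. The `TruthParticle` layout, which stores a PDG code and parent indices, is hypothetical and is not the ATLAS truth-record interface. The rule of starting from the last Higgs copy and walking back through intermediate Higgs copies is also an assumption. The gluons-versus-quarks criterion follows the text; in a given generator record the Higgs may instead be attached to intermediate W/Z bosons, which this sketch would label "unknown".

```python
from dataclasses import dataclass, field
from typing import List

# PDG Monte Carlo particle codes
GLUON = 21
HIGGS = 25
QUARKS = set(range(1, 7))  # d, u, s, c, b, t (antiquarks carry negative codes)


@dataclass
class TruthParticle:
    pdg_id: int
    parents: List[int] = field(default_factory=list)  # indices into the truth record


def classify_production(record: List[TruthParticle]) -> str:
    """Label an event 'GF', 'VBF' or 'unknown' from a (hypothetical) truth record."""
    # Start from the last Higgs boson in the record, i.e. the copy that decays.
    current = max(i for i, p in enumerate(record) if p.pdg_id == HIGGS)
    # Walk backwards through intermediate Higgs copies; the bound guards bad records.
    for _ in range(len(record)):
        higgs_parents = [j for j in record[current].parents if record[j].pdg_id == HIGGS]
        if not higgs_parents:
            break
        current = higgs_parents[0]
    parent_ids = {abs(record[j].pdg_id) for j in record[current].parents}
    if parent_ids and parent_ids <= {GLUON}:
        return "GF"
    if parent_ids and parent_ids <= QUARKS:
        return "VBF"
    return "unknown"
```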

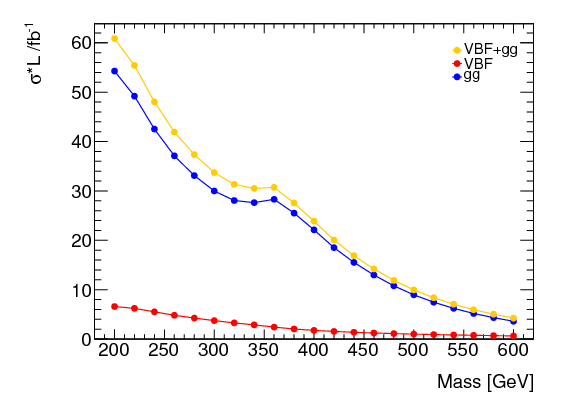

Figure 15 compares the VBF and GF cross sections at \( \sqrt{s} \) = 7 \( \TeV \) as a function of Higgs mass. The cross section falls by a factor of six over the mass range investigated.

Figure 15: Comparison of predicted number of VBF and GF events produced at \( \sqrt{s} \) = 7 \( \TeV \) for 1 \( \fb \) as a function of Higgs mass.

Background example (Z+jets)

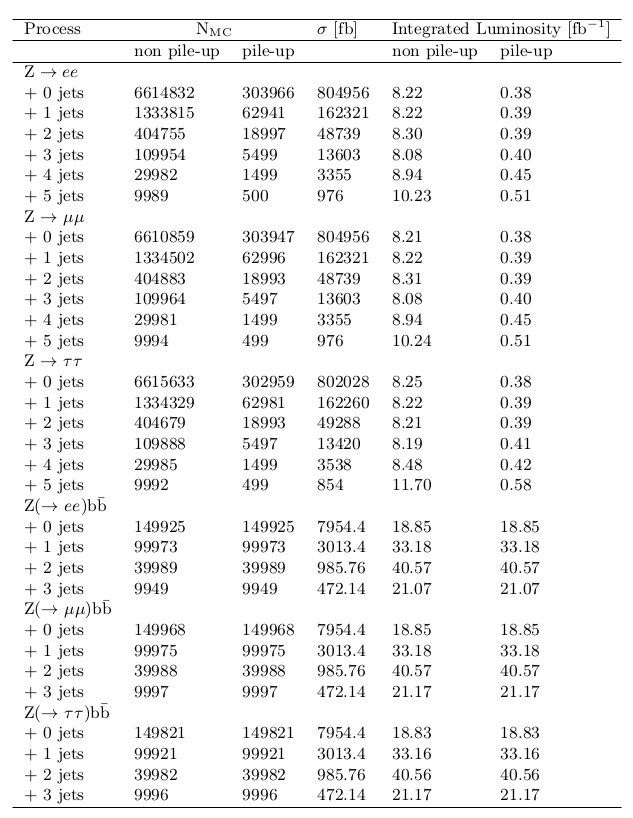

Z+jets samples were simulated with ALPGEN v2.13 [27] interfaced with HERWIG v6.510 [28] for modelling of parton showers and hadronization. The simulated samples include hard matrix elements for Z and Z \( \bb \) production with additional partons in the final state: 0-5 partons for the Z samples and 0-3 partons for the Z \( \bb \) samples. In each case Z decays to \( ee,\mu\mu \) and \( \tau\tau \) are considered. The cross sections were taken from the generator prediction, and a k-factor of 1.22 [13] is used to scale the LO generator cross section to NLO. Details of the Z+jets samples used are shown in Figure 16.
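The k-factor scaling and the "corresponding integrated luminosity" column of the sample tables are related by simple arithmetic. A sample of \( N_{MC} \) events with cross section \( \sigma \) is equivalent to \( L = N_{MC}/\sigma \). Normalising it to a data luminosity \( L_{data} \) gives each event a weight \( w = \sigma L_{data}/N_{MC} \). In the sketch below the cross section and event count are hypothetical. The text does not say whether the k-factor is applied per sub-sample or to the total.

```python
K_FACTOR_ZJETS = 1.22  # LO -> NLO scaling for Z+jets quoted in the text


def nlo_cross_section_fb(sigma_lo_fb: float, k_factor: float = K_FACTOR_ZJETS) -> float:
    """Scale a LO generator cross section (fb) to NLO with a constant k-factor."""
    return sigma_lo_fb * k_factor


def equivalent_luminosity_ifb(n_mc: int, sigma_fb: float) -> float:
    """Integrated luminosity (fb^-1) equivalent to n_mc simulated events."""
    return n_mc / sigma_fb


def event_weight(sigma_fb: float, n_mc: int, lumi_ifb: float) -> float:
    """Per-event weight that normalises an MC sample to a data luminosity (fb^-1)."""
    return sigma_fb * lumi_ifb / n_mc


if __name__ == "__main__":
    sigma = nlo_cross_section_fb(1000.0)             # hypothetical LO cross section, fb
    print(equivalent_luminosity_ifb(61_000, sigma))  # 61000 / 1220 fb = 50.0 fb^-1
    print(event_weight(sigma, 61_000, 0.0334))       # weight for 33.4 pb^-1 of data
```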

Figure 16: Summary of Z+jets Monte Carlo sample properties used in this analysis, including number of simulated events (N \( _{\mathrm{MC}} \) ), cross section ( \( \sigma \) [fb]) and corresponding integrated luminosity [ \( \fb \) ]. The samples were generated with ALPGEN interfaced with HERWIG.

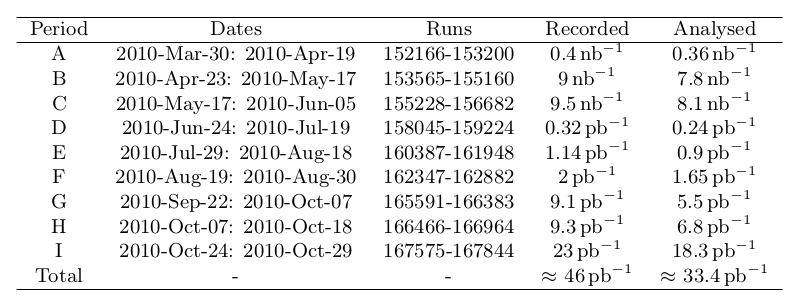

p-p Collision Data

The real p-p collision data used in this study are from the ATLAS 2010 dataset, recorded while the LHC operated at \( \sqrt{s} \) = 7 \( \TeV \) between 30 \( ^{th} \) March and 29 \( ^{th} \) October 2010. During this time a total integrated luminosity of 48.8 \( \pb \) was delivered to ATLAS, of which 46.72 \( \pb \) was recorded. The 2010 data-taking sessions were divided into periods, each made up of a number of runs, and each run has a unique identifying number. A typical run is a period of stable data taking: beams are injected into the LHC, stable beams are declared, and data taking continues until the beams are dumped or lost. The 2010 data are composed of periods A to I. Each run is composed of fixed luminosity intervals called lumi-blocks. Within ATLAS, each lumi-block carries a set of indicators called data quality flags, which record beam and detector conditions. Physics analysers use this information through 'Good Run Lists' (GRLs), which specify the quality flags that must be passed for their analysis. For this study, only runs passing certain quality requirements were used. In particular, the following flags were required to be passed:

- ATLGL- requires that the run has been evaluated by the data quality group.

- LUMI- requires that the luminosity and forward detectors are operational and that the luminosity is correctly calculated.

- ATLSOL, ATLTOR- require the currents in the solenoid and toroid magnets to be stable.

- L1CTP, L1CAL, L1MUB, L1MUE- require that the Level 1 Central Trigger Processor, calorimeter trigger and barrel/end cap muon triggers perform with good efficiency and that there are no timing, synchronisation or data flow problems.

- cp_eg_electron_barrel, cp_eg_electron_endcap, cp_mu_mstaco, cp_mu_mmuidcb, cp_jet_jetb, cp_jet_jetea, cp_jet_jetec- are required to allow measurement of electrons, muons and jets.

- cp_met_metcalo, cp_met_metmuon- are required for \( \etmiss \) measurement (the latter was not required for periods A-C since this flag was not present).

- cp_tracking- requires the inner detector to be in good working order.

- cp_btag_life- requires that the detector sub-systems needed for b-tagging measurements are operable (not required for periods A-C since this flag was not present).
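The flag requirements listed above, including the two flags that are only required after periods A-C, can be expressed as a subset test per lumi-block. This is a sketch under an assumed data model. Real GRLs are XML lists of good run and lumi-block ranges produced with ATLAS data-quality tools, whereas here each lumi-block is simply represented by the set of flag names that are green.

```python
from typing import AbstractSet, FrozenSet

# Flags required in every period (names as listed above).
BASE_FLAGS: FrozenSet[str] = frozenset({
    "ATLGL", "LUMI", "ATLSOL", "ATLTOR",
    "L1CTP", "L1CAL", "L1MUB", "L1MUE",
    "cp_eg_electron_barrel", "cp_eg_electron_endcap",
    "cp_mu_mstaco", "cp_mu_mmuidcb",
    "cp_jet_jetb", "cp_jet_jetea", "cp_jet_jetec",
    "cp_met_metcalo", "cp_tracking",
})
# Flags absent in periods A-C, so only required from period D onwards.
LATE_FLAGS: FrozenSet[str] = frozenset({"cp_met_metmuon", "cp_btag_life"})
EARLY_PERIODS: FrozenSet[str] = frozenset({"A", "B", "C"})


def required_flags(period: str) -> FrozenSet[str]:
    """Flags that must be green for a lumi-block in the given data period."""
    return BASE_FLAGS if period in EARLY_PERIODS else BASE_FLAGS | LATE_FLAGS


def lumiblock_is_good(period: str, green_flags: AbstractSet[str]) -> bool:
    """True if every flag required for this period is green (hypothetical model)."""
    return required_flags(period) <= green_flags
```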

Figure 17: Details of the data used in this analysis.

Physics Objects

In essence, the different parts of the detector are designed to detect different physics objects: jets, leptons and, indirectly through missing transverse energy, neutrinos. The detector components themselves, however, measure raw electrical signals, usually produced when the particle in question interacts with a particular component of the detector. Physics objects must therefore be obtained through reconstruction and identification methods. The aim of reconstruction is to recover from the raw sub-detector signals, as accurately as possible and on an event-by-event basis, the truth particles in the Monte Carlo simulation and the actual particles produced in real proton-proton collisions. Within ATLAS, this procedure is performed within the ATHENA framework (version 15.6.13 was used in this study). A 100 \( \% \) accurate reconstruction is not achievable, because of limits on the measurement accuracy of the detector sub-systems and approximations in the reconstruction algorithms. For example, a particle may be reconstructed as the wrong type or not be detected at all. This is quantified using the reconstruction efficiency and the misidentification rate. The reconstruction efficiency \( \epsilon \) of a particle type is the fraction of true particles (in this study, the Monte Carlo truth particles) that are correctly reconstructed as that type of physics object, as shown in Eqn. \eqref{eqn_identificationEffic}, $$ \begin{equation} \epsilon = \frac{N^{matched}}{N^{all}} \label{eqn_identificationEffic} \end{equation} $$

where \( N^{all} \) is the total number of Monte Carlo truth particles and \( N^{matched} \) is the number of these that \( match \) a reconstructed object of the same type.

In order to quantify the rate at which a particle is mis-identified, the mis-identification rate (\( \chi \)) shown in Eqn. \eqref{eqn_identificationFakeRate} is defined as the number of reconstructed objects not matched to a truth particle of the same type (\( N^{not-matched} \)) divided by the total number of reconstructed objects (\( N^{all-reconstructed} \)). $$ \begin{equation} \chi = \frac{N^{not-matched}}{N^{all-reconstructed}} \label{eqn_identificationFakeRate} \end{equation} $$

The signal in this analysis contains electrons, muons and jets, so it is particularly important that these objects are well reconstructed. The efficiency and mis-identification rates in the signal have therefore been studied and compared with those in some of the main backgrounds. The matching criterion is geometrical. It requires the distance \( \Delta R = \sqrt{(\Delta\eta)^{2} + (\Delta\phi)^{2}} \) between the truth particle and the reconstructed object to be less than 0.02 for electrons and muons and less than 0.1 for jets.
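The matching and the definitions of \( \epsilon \) and \( \chi \) above can be sketched directly. Objects are reduced to \( (\eta, \phi) \) pairs, and \( \Delta\phi \) is wrapped into \( [-\pi, \pi] \). This is a simplified illustration, not the analysis code. It allows one reconstructed object to match several truth particles and does no one-to-one assignment, and the kinematic thresholds applied to truth and reconstructed objects are left to the caller.

```python
import math
from typing import Sequence, Tuple

EtaPhi = Tuple[float, float]  # (eta, phi) of a truth particle or reconstructed object


def delta_r(a: EtaPhi, b: EtaPhi) -> float:
    """Delta R = sqrt(d_eta^2 + d_phi^2), with d_phi wrapped into [-pi, pi]."""
    d_eta = a[0] - b[0]
    d_phi = math.remainder(a[1] - b[1], 2.0 * math.pi)
    return math.hypot(d_eta, d_phi)


def has_match(obj: EtaPhi, candidates: Sequence[EtaPhi], max_dr: float) -> bool:
    return any(delta_r(obj, c) < max_dr for c in candidates)


def efficiency(truth: Sequence[EtaPhi], reco: Sequence[EtaPhi], max_dr: float) -> float:
    """epsilon = N_matched / N_all, counted over truth particles."""
    if not truth:
        return float("nan")
    return sum(has_match(t, reco, max_dr) for t in truth) / len(truth)


def misid_rate(truth: Sequence[EtaPhi], reco: Sequence[EtaPhi], max_dr: float) -> float:
    """chi = N_not-matched / N_all-reconstructed, counted over reconstructed objects."""
    if not reco:
        return float("nan")
    return sum(not has_match(r, truth, max_dr) for r in reco) / len(reco)
```

For electrons and muons `max_dr` would be 0.02, and for jets 0.1.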

First, as an example, the methods used to reconstruct electrons are discussed. Then any specific requirements on the objects used in the analysis are defined, and examples of the reconstruction performance for the various physics objects are summarised.

Electrons

The high rates expected from the vast QCD background at the LHC make it difficult to reconstruct and identify electrons correctly over the broad \( \pt \) range in which the physics channels of interest produce them. In the \( \pt \) range 20-50 \( \Gc \), the ratio of isolated electrons to QCD jets is expected to be below 10 \( ^{-5} \). Although this ratio becomes more favourable at higher energy, a high jet rejection is required.

Electron Reconstruction

Electrons are reconstructed in ATLAS using both calorimeter and inner detector information. There are two main algorithms for reconstruction within the inner detector acceptance (\( |\eta| < \) 2.5). The EGAMMA algorithm is designed to reconstruct high \( \et \) isolated electrons. It is seeded by energy deposits in cells of the electromagnetic calorimeter and then searches for a matching track in the inner detector. The \( softe \) algorithm is optimized to reconstruct soft (low \( \pt \)) electrons and is seeded by an inner detector track. It then searches for a matching cluster in the electromagnetic calorimeter. The electrons in the H \( \rightarrow \) ZZ \( \rightarrow \ell\ell\nu\nu \) signal are expected to be energetic, so only electrons reconstructed with the EGAMMA algorithm are used and discussed in the following.

Electron Identification

After a candidate electron has been reconstructed, an electron identification procedure is applied to establish its reconstruction quality. Currently the default identification procedure applies a series of cuts related to shower shape, tracking and cluster-track matching variables (which are optimized according to \( \et \) and \( \eta \)). Three standard electron definitions are used: \( Loose \), \( Medium \) and \( Tight \), each corresponding to an increasingly selective set of cuts, whereby each definition includes the cuts of the looser definitions.

Electrons used in this analysis

Electrons used in this analysis are reconstructed with the standard EGAMMA algorithm. They are required to have a track within the acceptance of the tracker and electromagnetic calorimeter, and a transverse energy measured in the calorimeter of \( \et \) > 20 \( \GeV \). Electrons are required to pass the \( Robust \) - \( Medium \) identification cuts. \( Robust \) - \( Medium \) electrons are \( Medium \) electrons with a few changes introduced by the \( e \gamma \) performance group. These changes keep the electron identification robust against discrepancies between Monte Carlo and 2010 data. In particular, the electron shower shapes were shown to be wider in data than in Monte Carlo, so the cuts on \( R_{\eta} \) and \( w_{\eta2} \) are loosened. Further, the hadronic leakage cut is modified because of a change in the modelling of the hadronic calorimeter noise (in early data it is modelled with a wider double Gaussian). Hereafter, any reference to electrons refers to the \( Robust \) - \( Medium \) definition [29].

During the 2010 data-taking period a number of cells in the electromagnetic calorimeter were lost because of problems in the readout electronics. This effect is not modelled in the Monte Carlo samples used. To account for it, the (OTX) procedure is implemented, which removes any electrons in the regions around the lost cells. The lost cells are identified using the database as it stood at the end of the 2010 data-taking period (from Run 167521).

A number of additional corrections [30], recommended by the \( e \gamma \) performance group, are applied to the data and Monte Carlo to improve the agreement between them. The energy scale of electrons in data is corrected as \( E_{Corrected} = E_{Original}/(1+scale) \). Here \( scale \) = -0.0096 for \( |\eta_{cluster}| \) < 1.4 and 0.0189 for 1.4 < \( |\eta_{cluster}| \) < 2.5, where \( \eta_{cluster} \) is the electron cluster \( \eta \). To maximise the reconstruction efficiency, electrons in the crack region 1.37 < \( |\eta| \) < 1.52 are retained. For the \( \mH \) = 200 \( \Gcs \) signal this was shown to increase the VBF statistics by approximately 8 \( \% \). The energy of electrons in the crack is scaled by 5 \( \% \) in the data and 3 \( \% \) in the Monte Carlo. In addition, measurements of the electron identification efficiency in \( \ztoee \) and \( \W \rightarrow e \nu \) events suggest that the Monte Carlo overestimates the efficiency for \( Robust \) - \( Medium \) electrons. This is corrected by weighting the Monte Carlo with \( \eta \) dependent scale factors [31].
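The data energy-scale correction quoted above is a piecewise function of the cluster \( |\eta| \). The sketch below implements exactly the two \( scale \) values given. Assigning the boundary \( |\eta_{cluster}| = 1.4 \) to the outer region, and raising an error beyond 2.5, are assumptions, since the text leaves both cases unspecified. The separate crack rescaling is not included because its direction is not stated.

```python
def electron_scale(abs_eta_cluster: float) -> float:
    """Energy-scale offset for electrons in 2010 data, as quoted in the text."""
    if abs_eta_cluster < 1.4:
        return -0.0096
    if abs_eta_cluster < 2.5:
        return 0.0189  # |eta_cluster| = 1.4 exactly is assigned here (assumption)
    raise ValueError("no correction defined for |eta_cluster| >= 2.5")


def corrected_energy(e_original: float, eta_cluster: float) -> float:
    """E_corrected = E_original / (1 + scale); applied to data electrons only."""
    return e_original / (1.0 + electron_scale(abs(eta_cluster)))
```

In the barrel (\( scale < 0 \)) the correction raises the measured energy, and in the end-cap it lowers it.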

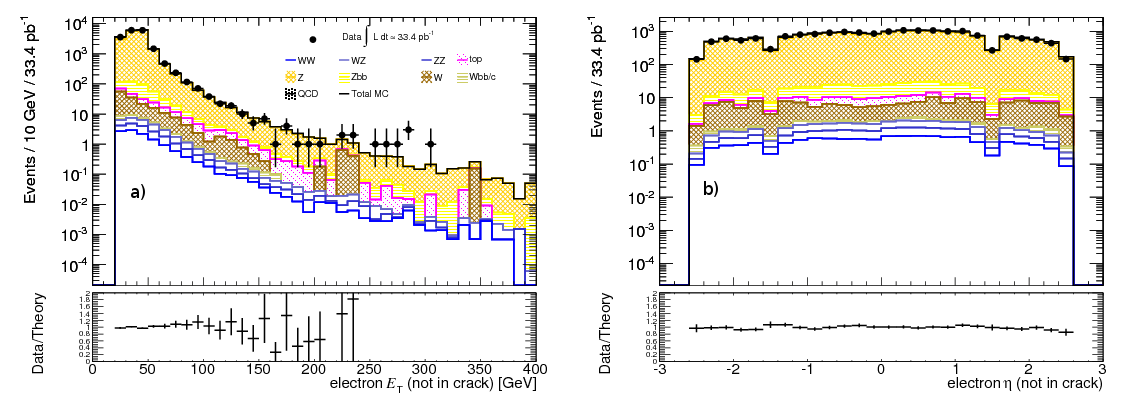

Overall performance of electron identification with corrections

The efficiency of the electron selection used in this analysis was investigated for the VBF \( \mH \) = 200 \( \Gcs \) signal sample and for two backgrounds. These are expected to be sources of relatively isolated electrons (\( \ztoee \)) and non-isolated electrons (\( \toptop \)). The efficiency is measured by comparing reconstructed electrons with Monte Carlo truth-level electrons with \( \et \) > 20 \( \GeV \) and \( |\eta| < \) 2.5, using a matching criterion of \( \Delta R < \) 0.02. As expected, \( \ztoee \), the source of isolated electrons, has the largest efficiency for the EGAMMA algorithm. It is promising, however, that the signal efficiency is nearly 20 \( \% \) larger than that of \( \toptop \), the source of relatively non-isolated electrons. Furthermore, requiring the \( Robust \) - \( Medium \) identification reduces the efficiency by approximately 10 \( \% \) for the signal and \( \ztoee \), but by closer to 20 \( \% \) for \( \toptop \). Beyond this level, the cuts applied have a similar effect on each sample, as expected.

Figure 18 compares data and Monte Carlo for some basic electron variables, including electron \( \et \) and \( \eta \) (without crack electrons). As with all subsequent object distributions in this section, the plot shows the total background Monte Carlo distribution, represented by the total MC line, together with the contribution of each background considered. The data are shown by the circular markers. These distributions are made with the lepton requirements detailed in the section Lepton Selection. Good agreement is found between data and Monte Carlo for non-crack electrons. For crack electrons, despite the corrections applied, the Monte Carlo underestimates the data at low \( \et \).

The electron identification efficiency and mis-identification rates were also investigated as a function of electron \( \et \) and \( |\eta| \), comparing the \( \mH \) = 200 \( \Gcs \) signal, \( \ztoee \) and \( \toptop \). Reconstructed electrons are required to pass the selection criteria outlined previously. To account for resolution effects, Monte Carlo truth electrons must have \( |\eta| < \) 2.5 and \( \et > \) 22(18) \( \GeV \) for the efficiency (mis-identification) calculation. Electron efficiencies are observed to increase with \( \et \). As a function of \( \eta \) the reconstruction efficiency is fairly constant, although it drops between 1.37 < \( |\eta| \) < 1.52 because of the electromagnetic barrel/end-cap transition. At higher \( |\eta| \) the reconstruction efficiency worsens because of poorer tracking performance in the forward regions. Among the samples, \( \toptop \), the source of non-isolated electrons, shows a lower reconstruction efficiency than the signal. \( \ztoee \), the source of isolated electrons, shows a higher one. This trend is seen as a function of both \( \et \) and \( |\eta| \).

The VBF component of the signal shows a larger mis-identification rate than the GF component, which is attributed to the VBF component being a source of less isolated electrons. For the same reason, the mis-identification rate for \( \toptop \) is larger than that for \( \ztoee \). As expected, the mis-identification rates decrease with \( \et \) because the identification efficiency is higher at higher \( \et \). The mis-identification rate is approximately constant in \( |\eta| \), but it is lower in the crack region because of the decreased efficiency there.

Figure 18: Comparison of data and Monte Carlo a) \( \et \) and b) \( \eta \) distributions for electrons (not in crack) used in this analysis.

Muons

Muons are reconstructed from the tracks they produce in the Inner Detector and Muon Spectrometer. Three types of muon object can be reconstructed, depending on the information available from these sub-systems. Standalone muons are reconstructed from a Muon Spectrometer track over its acceptance (\( |\eta| < \) 2.7). Combined muons are reconstructed over the acceptance of the Inner Detector (\( |\eta| < \) 2.5) by matching a standalone muon to an Inner Detector track and combining the measurements. Segment tagged muons are reconstructed from an Inner Detector track matched to a short Muon Spectrometer track (called a segment), typically within one innermost station.

Muons used in this analysis

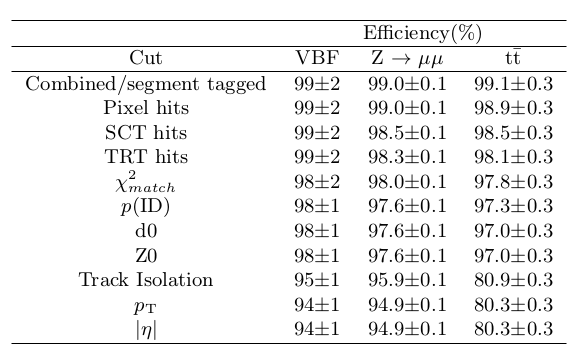

In this study, combined and segment tagged muons from the STACO family are used. Muons must have \( \pt > \) 20 \( \Gc \) and \( |\eta| < \) 2.5. Muon tracks must be isolated: the sum of the momenta of all tracks with \( \pt > \) 1 \( \Gc \) in a cone of \( \Delta R \) = 0.2 around the muon must be less than 0.8 \( \Gc \). Additional muon quality requirements follow the guidelines of the muon combined performance group [32]. They mainly protect against mismatching between muon spectrometer and inner detector tracks. Identified muons are required to have traversed all the inner detector sub-detectors, with \( \geq \) 1 pixel hit and \( \geq \) 6 SCT hits on the muon track. Within the acceptance of the TRT, hits are required as follows. Let n \( _{TRThits} \) be the number of TRT hits on the muon track, n \( _{TRToutliers} \) the number of TRT outliers on the muon track, and n their sum. For \( |\eta| < \) 1.9 it is required that n > 5 and n \( _{TRToutliers} \) < 0.9 n. For \( |\eta| \) > 1.9, if n > 5, then it is required that n \( _{TRToutliers} \) < 0.9 n. Additionally, for combined muons \( \chi^{2}_{match} \) is required to be less than 150. For tracks with muon spectrometer transverse momentum \( \pt \) (MS) < 50 \( \Gc \), the difference between the extrapolated momentum in the muon spectrometer \( p \) (MS extrap) and the momentum in the inner detector \( p \) (ID) must be greater than 0.4 \( p \) (ID). To suppress cosmic muons, the transverse impact parameter d0 (the distance of closest approach relative to the primary vertex) is required to be less than 1 mm. The absolute value of the longitudinal impact parameter z0, relative to the \( z \) vertex at the beam-line, is required to be less than 1 cm. A number of additional corrections are applied to muons in the Monte Carlo following the recommendations of the muon performance group.
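The kinematic, isolation, hit and impact-parameter requirements above can be sketched as a single predicate. The `MuonCandidate` fields are a hypothetical flat representation, not the ATHENA muon interface. Treating \( |\eta| = 1.9 \) exactly as forward is an assumption. The STACO type, \( \chi^{2}_{match} \) and momentum-balance requirements are omitted here.

```python
from dataclasses import dataclass


@dataclass
class MuonCandidate:
    pt_gev: float
    eta: float
    track_iso_gev: float   # sum pT of tracks with pT > 1 GeV within Delta R = 0.2
    n_pixel_hits: int
    n_sct_hits: int
    n_trt_hits: int
    n_trt_outliers: int
    d0_mm: float           # transverse impact parameter w.r.t. the primary vertex
    z0_mm: float           # longitudinal impact parameter


def passes_trt(abs_eta: float, n_hits: int, n_outliers: int) -> bool:
    """TRT requirement as described in the text."""
    n = n_hits + n_outliers
    if abs_eta < 1.9:
        return n > 5 and n_outliers < 0.9 * n
    # |eta| >= 1.9: constrain the outlier fraction only if enough TRT hits exist
    return n_outliers < 0.9 * n if n > 5 else True


def passes_muon_selection(mu: MuonCandidate) -> bool:
    return (
        mu.pt_gev > 20.0
        and abs(mu.eta) < 2.5
        and mu.track_iso_gev < 0.8
        and mu.n_pixel_hits >= 1
        and mu.n_sct_hits >= 6
        and passes_trt(abs(mu.eta), mu.n_trt_hits, mu.n_trt_outliers)
        and abs(mu.d0_mm) < 1.0     # |d0| < 1 mm
        and abs(mu.z0_mm) < 10.0    # |z0| < 1 cm
    )
```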
The transverse momentum scale and resolution for combined muons were measured in data using the di-muon mass distribution in \( \ztomm \) decays [33]. The muon momentum scale was found to be reasonably well described, so no scale correction is applied. The resolution in data, however, was shown to be poorer than in the Monte Carlo. The difference is used to define smearing factors for the inner detector and muon spectrometer momentum components in the Monte Carlo. If both components were measured, the transverse momentum of the combined muon is obtained by weighting the components according to their relative resolution. If no measurement was made in the muon spectrometer (inner detector), the muon \( \pt \) is taken to be the smeared inner detector (muon spectrometer) \( \pt \). This procedure was done using the official code provided by the muon performance group. For combined and segment tagged muons, the muon efficiencies in simulation and data are shown in [34] to be well matched, so no efficiency correction is applied.

Overall performance of muon identification with corrections

Figure 19 shows the efficiency of the muon selection used in this analysis for the \( \mH \) = 200 \( \Gcs \) signal sample and for two backgrounds. These are expected to be sources of relatively isolated (\( \ztomm \)) and non-isolated (\( \toptop \)) muons. The efficiency is measured by comparing reconstructed muons with Monte Carlo truth-level muons with \( \pt \) > 20 \( \Gc \) and \( |\eta| \) < 2.5, using a geometrical matching criterion of \( \Delta R \) < 0.02. The efficiency achieved in the signal is close to that in \( \ztomm \) and similar to the value of 97 \( \% \) quoted in [34]. The efficiency for \( \toptop \) is about 10 \( \% \) lower. This loss is shown to come from the track isolation cut acting on the non-isolated muons in this sample.

Figure 19: Efficiency of muon selection cuts. Efficiencies are shown for \( \mH \) = 200 \( \Gcs \) VBF \( \htollnunu \) signal sample, \( \ztomm \) and \( \toptop \). Efficiencies are calculated by comparison of Monte Carlo truth and reconstructed muons.

As was done for electrons, a comparison of data and the Monte Carlo prediction for some of the main muon variables was performed. The distributions were made using the lepton requirements detailed in the section Lepton Selection. A good level of agreement was found.

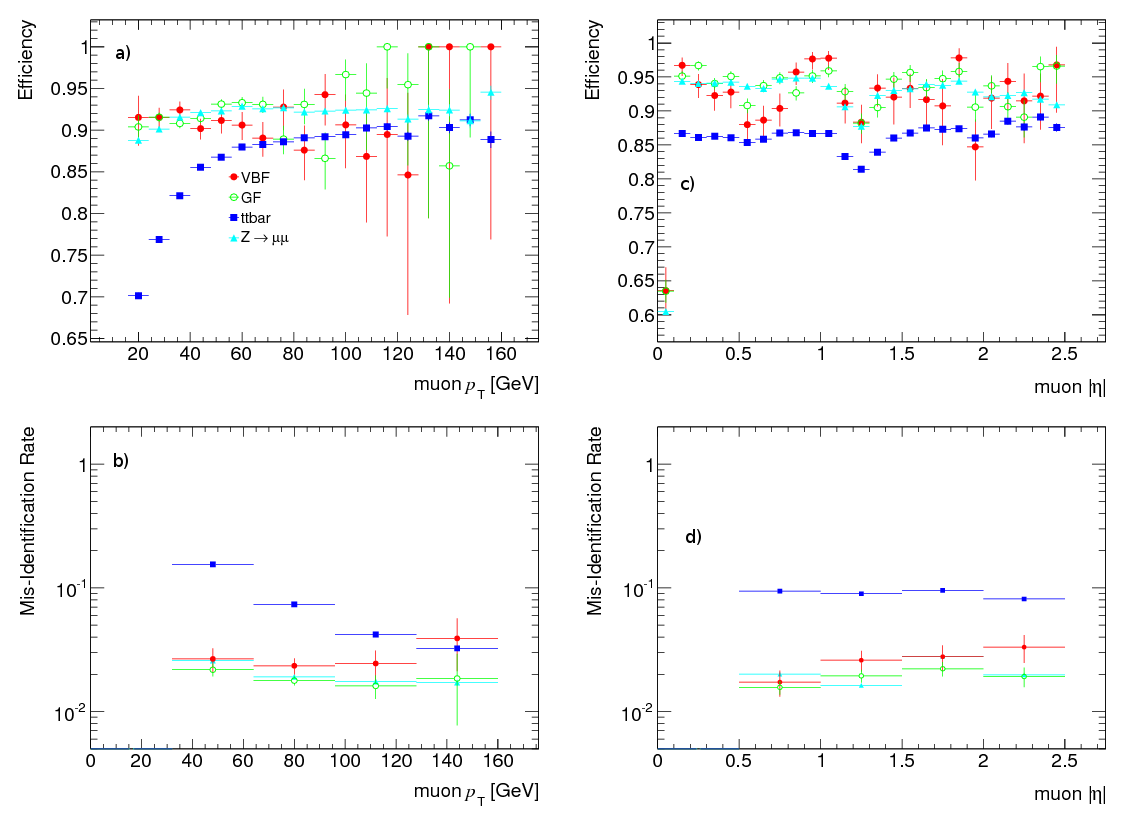

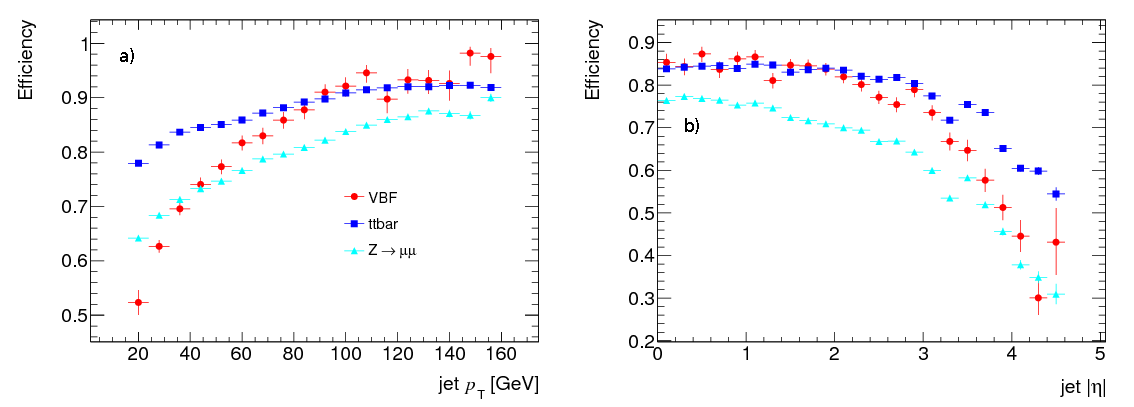

Figure 20 shows the muon identification efficiency and mis-identification rates for the muon selection adopted in this analysis, as a function of muon \( \pt \) and \( |\eta| \). It compares the \( \mH \) = 200 \( \Gcs \) signal, \( \ztomm \) and \( \toptop \) samples. Reconstructed muons must satisfy the muon selection detailed previously. Monte Carlo truth muons must have \( |\eta| < \) 2.5 and \( \pt > \) 22(18) \( \Gc \) for the efficiency (mis-identification) calculation. Muon efficiencies are observed to increase with \( \pt \) up to 60 \( \Gc \), above which they reach an approximately constant plateau. As a function of \( \eta \), the reconstruction efficiency rises quickly away from low \( |\eta| \), where the detector acceptance is poor because of the detector support structure. Efficiency is also degraded in the transition region 1.1 < \( |\eta| \) < 1.7, where there are fewer muon stations. Muon mis-identification rates are highest for \( \toptop \), because the non-isolated muons it contains are harder to reconstruct. At the other extreme, \( \ztomm \) exhibits the lowest mis-identification rate. The mis-identification rate of the VBF signal is higher than that of the GF component but lower than that of \( \toptop \). These findings indicate that, with the selection described, muons in the signal can be identified efficiently and with fewer mistakes than in a major expected background, \( \toptop \).

Figure 20: Muon reconstruction efficiency (upper plots) and mis-identification rate (lower plots) as a function of (a,b) \( \pt \) and (c,d) \( |\eta| \) for VBF signal (filled circles), GF signal (open circles), \( \toptop \) (filled squares) and \( \ztomm \) (filled triangles) after the pre-selection of muons. Signal components correspond to the \( \mH \) = 200 \( \Gcs \) sample.

Jets

Jets are collimated sprays of hadrons produced by energetic partons. They deposit energy in the electromagnetic and hadronic calorimeters, and their charged constituents leave tracks in the Inner Detector. Jets are reconstructed from calorimeter information over its full acceptance (\( |\eta| < \) 4.9) using jet-finding algorithms. Many such algorithms exist, but each one builds jets by combining the four-momenta of its input objects. The input does not have to come from the calorimeter (which gives calorimeter jets); tracks, or particles from an event generator, are also possible inputs. To reconstruct calorimeter jets, the calorimeter cells are combined into larger objects called calorimeter towers or topological cell clusters. These form the input to the jet-finding algorithms.

Jets used in this analysis

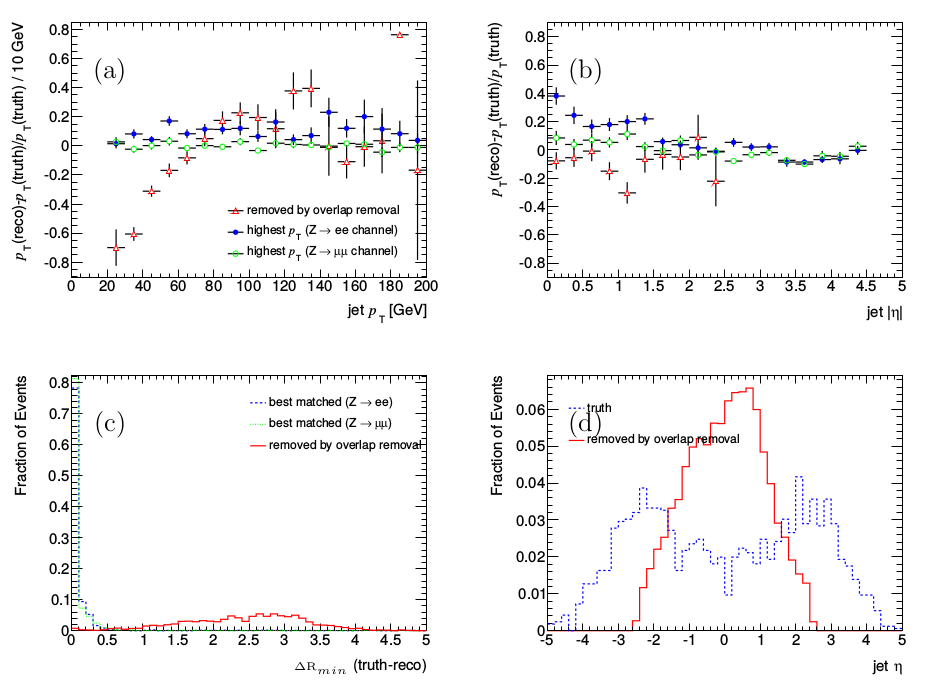

The jets used in this analysis are reconstructed using the anti-\( k_{T} \) algorithm with a distance parameter of 0.4. Topological clusters are used as input, and the jets are calibrated using global calibration. The jet energy is corrected from the electromagnetic scale to the hadronic scale using the recommended \( \pt \)- and \( \eta \)-dependent Jet Energy Scale. This correction was derived from Monte Carlo but verified with data. It was therefore expected to give a more accurate calibration than one based on simulation alone at the time of writing. Jets must have \( \pt > \) 20 \( \Gc \) and \( |\eta| < \) 4.5. Jets may also originate from p-p collisions other than the one of interest. To suppress them, at least 75 \( \% \) of the summed \( \pt \) of the tracks associated with a jet must come from the primary vertex of that collision. This is achieved by requiring a Jet Vertex Fraction \( |JVF| \) > 0.75. The requirement reduces the effect of in-time pile-up, in which multiple p-p collisions occur within the same bunch crossing.

Overall performance of jet identification with corrections

Figure 21 shows the reconstruction efficiency of the jets used in this analysis as a function of \( \pt \) and \( |\eta| \). It covers the \( \mH \) = 200 \( \Gcs \) signal, \( \ztomm \) +jets and \( \toptop \) samples. Jet reconstruction in the VBF signal appears slightly worse than in \( \toptop \) at low jet \( \pt \) and large \( |\eta| \). The performance in \( \ztomm \) +jets is lower than in the signal across the entire \( |\eta| \) range investigated. Overall, the signal maintains a jet reconstruction efficiency of over 60 \( \% \) for jets with \( \pt > \) 30 \( \Gc \) and \( |\eta| < \) 3.5.

ATLAS has a number of b-tagging algorithms that distinguish jets containing a b-hadron decay from jets containing only light quarks. In this analysis b-tagging is particularly important for suppressing backgrounds with a top quark decay. The b-tagging algorithms exploit the much longer lifetime of hadrons containing a b quark, which gives a pronounced decay length, \( c\tau\approx \) 450 \( \mu \) m, compared with light-quark hadrons. These decays are identified in one of two ways: from secondary vertices reconstructed from the tracks within a jet, or by combining the distances of closest approach to the primary vertex of all tracks in the jet. In this analysis, decays of b hadrons are identified using the secondary-vertex-based algorithm SV0 [35]. A jet is labelled a b-jet if its lifetime-signed decay length significance (b-tag weight) is greater than 5.72, following [36].
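The proper decay length quoted above is dilated in the laboratory frame by the boost \( \beta\gamma = p/m \). This is a rough illustration of why it is measurable. Assume, purely for illustration, a \( B^{0} \) meson of mass about 5.28 \( \Gcs \) with momentum 50 \( \Gc \) (an illustrative value, not taken from the analysis):

\[ L = \beta\gamma\, c\tau = \frac{p}{m}\, c\tau \approx \frac{50}{5.28} \times 450\ \mu\mathrm{m} \approx 4.3\ \mathrm{mm} \]

Harder b-jets therefore tend to have more displaced secondary vertices, which is the displacement that algorithms such as SV0 exploit.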

A series of cuts is applied to ensure that the jets used are free from coherent noise bursts in the electromagnetic calorimeter, calorimeter spikes and cosmic-ray or beam-induced background. These cuts follow the recommendations of the Jet/\( \etmiss \) group [37]. Any jet in the data that fails one of the \( loose \) criteria is classified as \( bad \) and is not considered further in the analysis. Any event containing a \( bad \) jet with \( \pt \) > 20 \( \Gc \) is then rejected. This selection is applied only to data, as the distributions of the quantities it uses are not well modelled in simulation.
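The jet requirements described above can be sketched as simple filters: \( \pt > \) 20 \( \Gc \), \( |\eta| < \) 4.5, \( |JVF| \) > 0.75, the SV0 weight threshold of 5.72 and the data-only bad-jet veto. The `Jet` record and function names are hypothetical. The convention that JVF is set to -1 for jets with no associated tracks is stated here as an assumption. Under it, the cut on \( |JVF| \) rather than JVF keeps such jets, for example those outside the tracker acceptance.

```python
from dataclasses import dataclass


@dataclass
class Jet:
    pt: float          # calibrated transverse momentum in GeV
    eta: float
    jvf: float         # Jet Vertex Fraction; assumed -1 for jets without associated tracks
    sv0_weight: float  # SV0 b-tag weight (lifetime-signed decay length significance)
    is_bad: bool       # True if the jet failed one of the loose cleaning criteria


def select_jets(jets, pt_min=20.0, eta_max=4.5, jvf_min=0.75):
    """Keep jets with pT > 20 GeV, |eta| < 4.5 and |JVF| > 0.75."""
    return [j for j in jets
            if j.pt > pt_min and abs(j.eta) < eta_max and abs(j.jvf) > jvf_min]


def passes_bad_jet_veto(jets, is_data, pt_min=20.0):
    """Reject data events with a bad jet of pT > 20 GeV; not applied to simulation."""
    if not is_data:
        return True
    return not any(j.is_bad and j.pt > pt_min for j in jets)


def is_b_tagged(jet, weight_cut=5.72):
    """SV0 decision using the weight threshold quoted in the text."""
    return jet.sv0_weight > weight_cut
```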

Figure 21: Jet reconstruction efficiency as a function of a) \( \pt \) and b) \( |\eta| \) for VBF component of signal (filled circles), \( \toptop \) (filled squares) and \( \ztomm \) +jets (filled triangles) after the preselection of jets. The signal sample corresponds to \( \mH \) = 200 \( \Gcs \).
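The anti-\( k_{T} \) clustering named in the jet definition can be illustrated with a naive implementation. It uses the distances \( d_{ij} = \min(k_{T,i}^{-2}, k_{T,j}^{-2})\,\Delta R_{ij}^{2}/R^{2} \) and \( d_{iB} = k_{T,i}^{-2} \), with four-momenta simply added when two objects merge. This \( O(N^{3}) \) sketch is for illustration only. Production software relies on optimised implementations such as FastJet, and the function names here are hypothetical. Inputs are assumed to have non-zero \( \pt \) and \( E > |p_z| \).

```python
import math


def _kinematics(p):
    """Return (pT^2, rapidity, phi) for a (px, py, pz, E) four-vector."""
    px, py, pz, e = p
    y = 0.5 * math.log((e + pz) / (e - pz))
    return px * px + py * py, y, math.atan2(py, px)


def _delta_r2(a, b):
    dy = a[1] - b[1]
    dphi = abs(a[2] - b[2])
    if dphi > math.pi:
        dphi = 2 * math.pi - dphi
    return dy * dy + dphi * dphi


def anti_kt(particles, R=0.4):
    """Cluster (px, py, pz, E) inputs into jets with the anti-kT algorithm."""
    objs = [tuple(p) for p in particles]
    jets = []
    while objs:
        kin = [_kinematics(p) for p in objs]
        best = None  # (distance, i, j); j is None for the beam distance d_iB
        for i, ki in enumerate(kin):
            d_ib = 1.0 / ki[0]
            if best is None or d_ib < best[0]:
                best = (d_ib, i, None)
            for j in range(i + 1, len(kin)):
                kj = kin[j]
                d_ij = min(1.0 / ki[0], 1.0 / kj[0]) * _delta_r2(ki, kj) / (R * R)
                if d_ij < best[0]:
                    best = (d_ij, i, j)
        _, i, j = best
        if j is None:
            jets.append(objs.pop(i))  # closest to the beam: promote to a final jet
        else:
            merged = tuple(a + b for a, b in zip(objs[i], objs[j]))  # E-scheme sum
            objs = [p for k, p in enumerate(objs) if k not in (i, j)]
            objs.append(merged)
    return jets
```

Because the distances are weighted by \( k_{T}^{-2} \), soft particles cluster around the hardest nearby object first. This is what gives anti-\( k_{T} \) jets their regular, cone-like shape.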

Missing Transverse Energy (\( \etmiss \))

Weakly interacting particles such as neutrinos typically traverse the entire detector volume without leaving any measurable signal. They therefore have to be measured indirectly, through the imbalance in observed transverse momentum that they cause, which is commonly expressed through the quantity \( \etmiss \). Reconstruction of \( \etmiss \) in ATLAS [38] [39] is in essence performed by a vector sum over all energy deposits in the calorimeter cells and all muon tracks. This is complicated because many processes besides the hard scatter also give rise to energy deposits and muon tracks. These include the underlying event, multiple interactions, pile-up and coherent electronics noise. An accurate measurement of \( \et \), and therefore of \( \etmiss \), requires each component to be correctly calibrated and energy lost in non-active material to be corrected for. The measurement is further complicated by fake \( \etmiss \) arising from three sources: noisy or dead calorimeter cells, badly reconstructed or fake muons, and acceptance effects due to gaps in detector coverage.
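In its simplest form, the vector sum described above defines \( \etmiss \) as minus the vector sum of all visible transverse energy. The sketch below assumes each calibrated calorimeter term or muon is given as an \( (E_T, \phi) \) pair. It ignores all of the calibration and noise subtleties listed in the text, and the function name is hypothetical.

```python
import math


def missing_et(terms):
    """Return (magnitude, azimuth) of the missing transverse energy.

    terms: iterable of (E_T in GeV, phi) pairs for calibrated calorimeter
    objects and muons. Missing ET is minus their vector sum.
    """
    terms = list(terms)  # allow generators, which would otherwise be consumed once
    mex = -sum(et * math.cos(phi) for et, phi in terms)
    mey = -sum(et * math.sin(phi) for et, phi in terms)
    return math.hypot(mex, mey), math.atan2(mey, mex)
```

For example, deposits of 30 \( \GeV \) at \( \phi = 0 \) and 40 \( \GeV \) at \( \phi = \pi/2 \) give \( \etmiss \) = 50 \( \GeV \), pointing opposite to their vector sum.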

Missing Transverse Energy used in this analysis

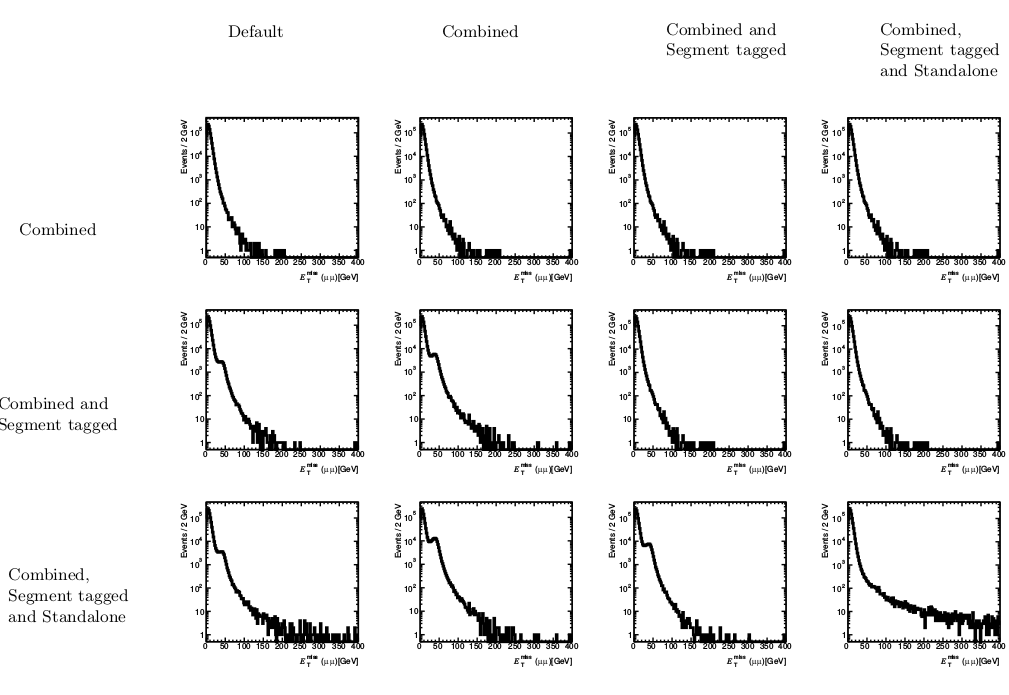

The definition of \( \etmiss \) used in this analysis follows the recommendation current at the time of writing. It is called MET \( _{LocHadTopo} \) and uses the cell-based approach with TopoClusters within \( |\eta| < \) 4.5. The calorimeter cell energies are calibrated using weights from the local hadron calibration of TopoClusters. Some events contain muons passing the muon selection criteria outlined previously. In these events, as recommended, the muon terms (\( \etmissmuon \)) in the \( \etmiss \) definition are removed and replaced explicitly with the selected muons to avoid double counting. The motivation for this is shown in Figure 22, which presents the \( \etmiss \) distribution in \( \ztomm \) events. The rows correspond to different types of selected muons, and the columns to different types of muons used to correct \( \etmiss \). The default \( \etmiss \) variable is plotted in the leftmost column. Combined and segment-tagged muons are required to have \( \pt \) > 20 \( \Gc \) and \( |\eta| < \) 2.5. Standalone muons are required to have \( \pt \) > 20 \( \Gc \) and \( |\eta| < \) 2.7. This study uses combined and segment-tagged muons in the selection. In that case, if the muon terms are not replaced, a bump around 50 \( \GeV \) appears in the \( \etmiss \) distribution. The bump disappears only when the muon terms are replaced explicitly with the combined and segment-tagged muons in the event.

Figure 22: \( \etmiss \) in \( \ztomm \) events. The rows represent the different types of muons considered. The leftmost column shows the default \( \etmiss \) distribution. Moving from left to right, the columns show the effect of replacing the \( \etmissmuon \) term with different types of muons.
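The muon-term replacement described above amounts to subtracting the default muon contribution and then subtracting the momenta of the selected muons. This sketch works on \( x \) and \( y \) components. It assumes the muon term is available as its contribution to the missing transverse energy components, that is, as minus the muon momentum sum. The function and its arguments are hypothetical and do not represent the ATLAS missing-energy software interface.

```python
import math


def met_with_muon_replacement(met_x, met_y, muon_term_x, muon_term_y, selected_muons):
    """Replace the default muon term of the missing ET with the selected muons.

    met_x, met_y: default missing ET components (GeV), including the muon term.
    muon_term_x, muon_term_y: the muon-term contribution to those components (GeV).
    selected_muons: (pT in GeV, phi) of muons passing the analysis selection.
    Returns (magnitude, mex, mey) of the corrected missing ET.
    """
    selected_muons = list(selected_muons)
    mex = met_x - muon_term_x - sum(pt * math.cos(phi) for pt, phi in selected_muons)
    mey = met_y - muon_term_y - sum(pt * math.sin(phi) for pt, phi in selected_muons)
    return math.hypot(mex, mey), mex, mey
```

If the default muon term already describes the selected muons exactly, the correction leaves \( \etmiss \) unchanged. Any difference between the two descriptions is what produces effects such as the bump seen in Figure 22.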

Overlap Removal

Jets are reconstructed from calorimeter information only. As a consequence, energy deposits produced by electrons and photons can be reconstructed as jets. To avoid using jets that are actually electrons, any jet within \( \Delta R \) < 0.4 of an electron satisfying the electron quality requirements detailed previously is removed. Likewise, any jet within \( \Delta R < \) 0.4 of a muon satisfying the requirements outlined previously is removed. Priority is given to muons over electrons, so any electron within \( \Delta R < \) 0.2 of a muon is removed.
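The overlap removal described above can be sketched with the stated \( \Delta R \) thresholds. The order of the steps is an assumption, since the text does not fix it. In this sketch, electrons near muons are removed first, and jets are then compared with the surviving electrons and all muons. The `Obj` record and function names are hypothetical.

```python
import math
from dataclasses import dataclass


@dataclass
class Obj:
    pt: float   # GeV
    eta: float
    phi: float


def delta_r(a, b):
    """Delta R = sqrt(deta^2 + dphi^2), with dphi wrapped into [-pi, pi)."""
    dphi = (a.phi - b.phi + math.pi) % (2 * math.pi) - math.pi
    return math.hypot(a.eta - b.eta, dphi)


def overlap_removal(electrons, muons, jets, dr_e_mu=0.2, dr_jet_lep=0.4):
    """Return (electrons, muons, jets) after removing overlapping objects.

    Assumed order: drop electrons within 0.2 of a muon (muons have priority),
    then drop jets within 0.4 of any surviving electron or any muon.
    """
    kept_e = [e for e in electrons if all(delta_r(e, m) >= dr_e_mu for m in muons)]
    leptons = kept_e + list(muons)
    kept_j = [j for j in jets if all(delta_r(j, lep) >= dr_jet_lep for lep in leptons)]
    return kept_e, list(muons), kept_j
```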

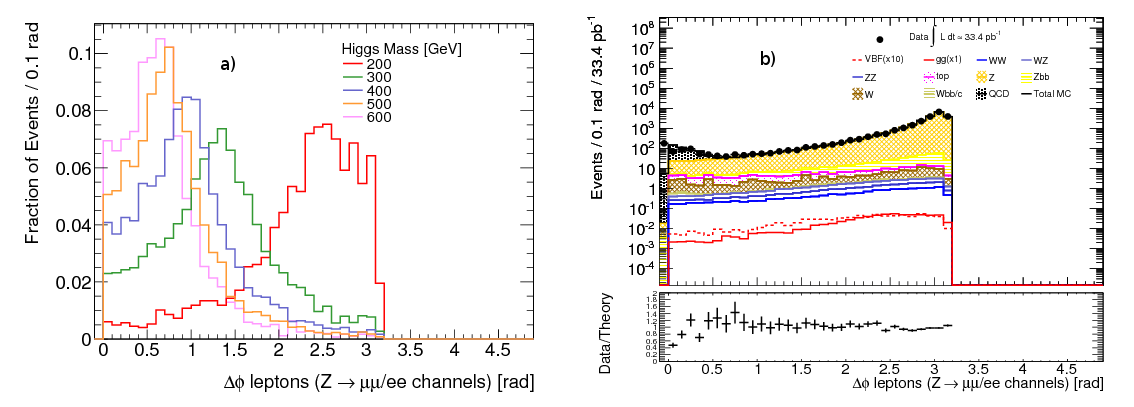

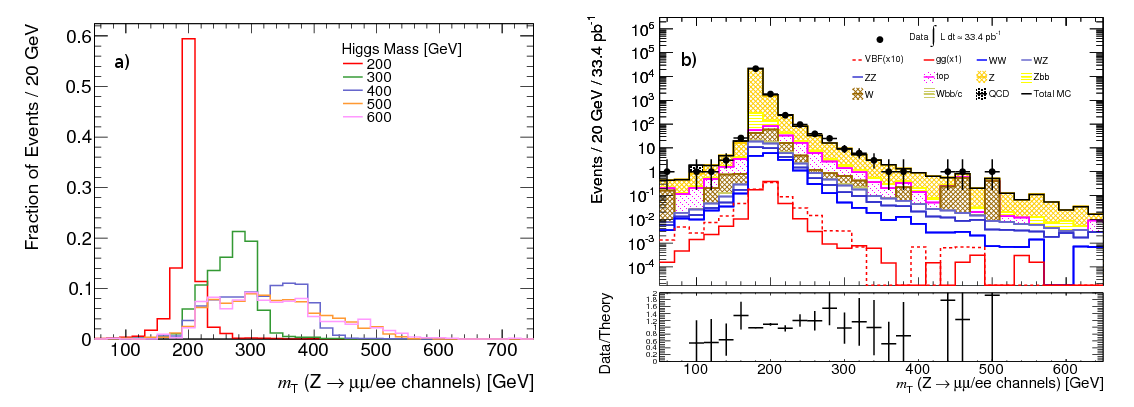

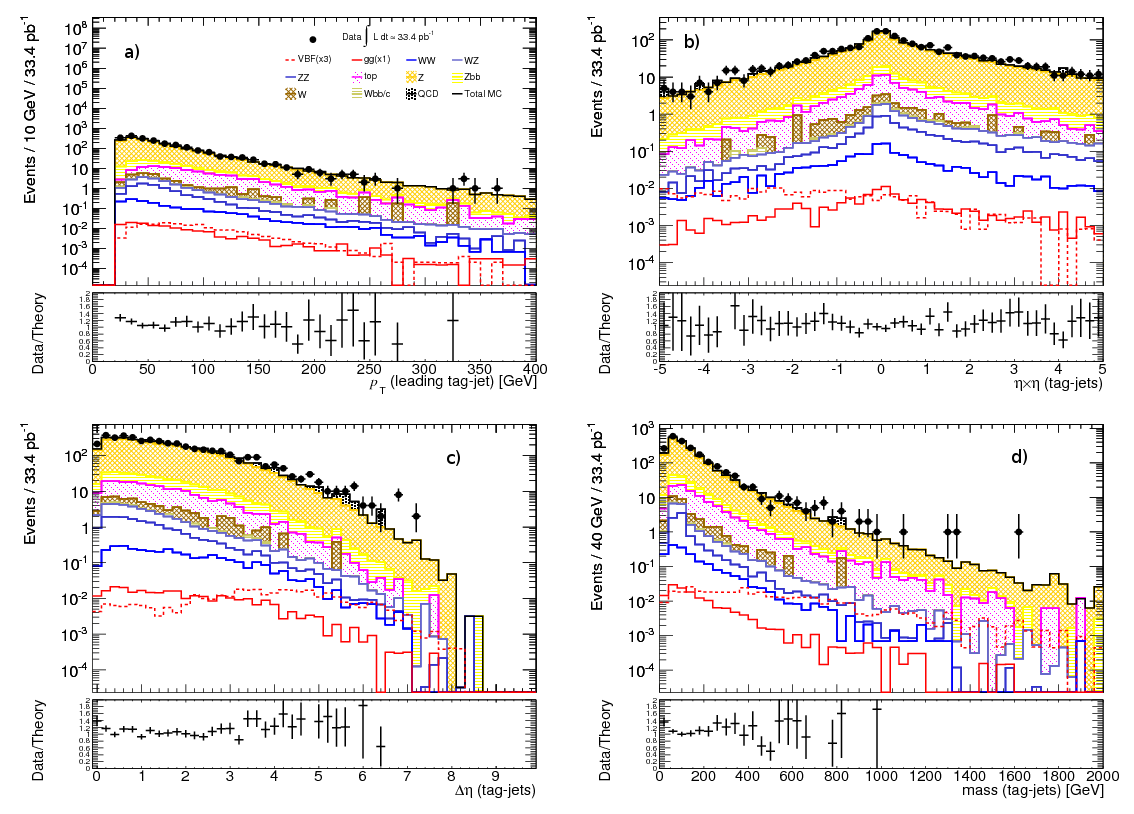

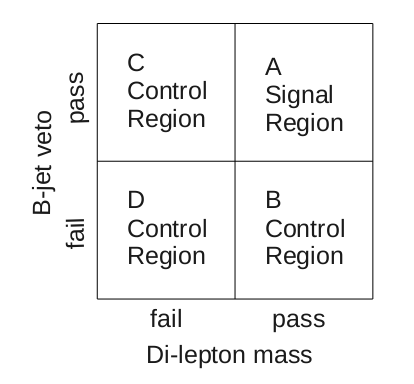

Feature Selection

This section details the cut-based selection used in the search for the SM Higgs boson produced by VBF in the \( \htollnunu \) channel. First, the event pre-selection cuts are discussed and motivated. Next, discriminating variables associated with the Higgs decay products are described, and a baseline set of cuts on these variables is discussed. The variables associated with the VBF remnants that help discriminate between signal and background are then described. In each case the results of the baseline selection are summarised. In addition, the effect of pile-up on each variable of interest is investigated. Comparisons between data and Monte Carlo are also made to ensure that the variables used are well understood. For backgrounds whose Monte Carlo samples contain too few events, an attempt is made to estimate their contribution.

Preselection

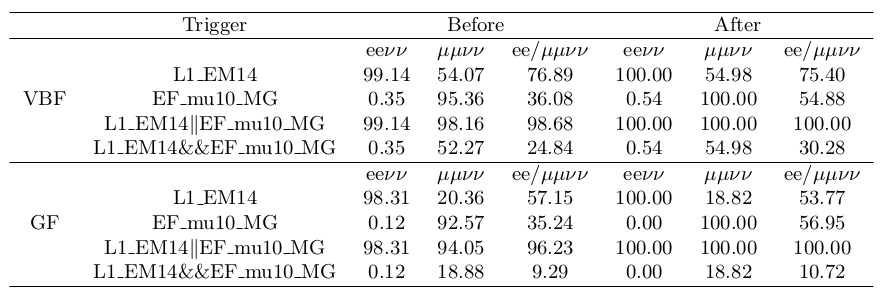

The pre-selection cuts used are outlined below. Half of the available Monte Carlo events are used, so that cuts can be optimized on an independent sample. First, at least one primary vertex with at least three associated tracks (\( \pt > \) 150 \( \Mc \)) is required.

To record signal events, this analysis relies on two single-lepton triggers designed to exploit the high-\( \pt \) leptons in the signal. Not all triggers used on the data were available in the ATHENA release used for the Monte Carlo samples in this study. In the electron channel (\( \ztoee \)) the L1_EM14 trigger is used, while in the muon channel (\( \ztomm \)) the Event Filter chain EF_mu10_MG is used. The same triggers are used in the pile-up Monte Carlo. They require, respectively, an electron with transverse energy greater than 14 \( \GeV \) and a muon with transverse momentum greater than 10 \( \Gc \). These triggers were shown to have efficiency comparable to those used in the data [19].
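The pre-selection above can be sketched as follows. It combines the vertex requirement (at least three tracks with \( \pt > \) 150 \( \Mc \)) with the channel's single-lepton trigger. The event representation is a hypothetical simplification: per-vertex lists of track \( \pt \) and a set of fired trigger names. The even/odd event-number split used to illustrate the "half of the Monte Carlo" rule is likewise an assumption, not the split used in the analysis.

```python
TRIGGER_FOR_CHANNEL = {"ee": "L1_EM14", "mumu": "EF_mu10_MG"}


def has_good_vertex(vertex_track_pts, min_tracks=3, track_pt_min=0.150):
    """At least one vertex with >= 3 associated tracks of pT > 150 MeV.

    vertex_track_pts: one list of track pT values (GeV) per reconstructed vertex.
    """
    return any(sum(1 for pt in tracks if pt > track_pt_min) >= min_tracks
               for tracks in vertex_track_pts)


def passes_preselection(vertex_track_pts, fired_triggers, channel):
    """Vertex requirement plus the single-lepton trigger of the given channel."""
    return (has_good_vertex(vertex_track_pts)
            and TRIGGER_FOR_CHANNEL[channel] in fired_triggers)


def in_optimisation_half(event_number):
    """Hypothetical split: even event numbers for optimisation, odd ones kept independent."""
    return event_number % 2 == 0
```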

Figure 23 compares the trigger efficiency of the VBF and GF components of the signal using the \( \mH \) = 200 \( \Gcs \) sample. The comparison covers each combination of the triggers used on the Monte Carlo, before and after the offline selection of the lepton candidates. Before the lepton selection, the efficiency of each individual trigger is above 90 \( \% \) in both the electron and muon channels for each signal component. After the selection of lepton candidates, it rises to 100 \( \% \) in each case. Single-lepton triggers therefore provide adequate performance for triggering both components of the signal. Some overlap exists between triggers and channels. It is much smaller for the muon trigger, which fires in the electron channel in typically less than 1 \( \% \) of events. In contrast, the electron trigger fires in the muon channel in close to 50 \( \% \) of events, because of the looser nature of the electron trigger employed.
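The efficiencies per trigger combination in Figure 23 are counting fractions. The sketch below computes them from per-event trigger decisions. The input format, a pair of booleans for the electron (L1_EM14) and muon (EF_mu10_MG) triggers, and the function name are hypothetical.

```python
def trigger_combination_efficiencies(events):
    """Percentage of events firing each trigger combination.

    events: list of (fired_electron_trigger, fired_muon_trigger) booleans per event.
    """
    n = len(events)
    if n == 0:
        raise ValueError("no events")
    counts = {"e": 0, "mu": 0, "e_or_mu": 0, "e_and_mu": 0}
    for fired_e, fired_mu in events:
        counts["e"] += fired_e
        counts["mu"] += fired_mu
        counts["e_or_mu"] += fired_e or fired_mu
        counts["e_and_mu"] += fired_e and fired_mu
    return {name: 100.0 * c / n for name, c in counts.items()}
```

The cross-channel rates quoted in the text can be read directly from the result. Running it separately on truth \( ee\nu\nu \) and \( \mu\mu\nu\nu \) events gives them as the `"mu"` entry for electron events and the `"e"` entry for muon events.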

As expected, among the pre-selection cuts the vertex requirement has a small and similar effect on all samples, typically reducing the sample statistics by less than 1 \( \% \). For all samples considered, the electron trigger is more efficient than the muon trigger. This is expected, because the electron trigger is a Level 1 trigger whereas the muon trigger is an Event Filter trigger. The Event Filter trigger places much stricter requirements on the triggered muons. Typically, the trigger efficiency is around 60(40) \( \% \) for samples with isolated electrons(muons). For samples with non-isolated leptons, the trigger efficiencies are much lower, below 30(20) \( \% \) for electrons(muons).

Figure 23: Trigger selection efficiencies (\( \% \)) for different combinations of the electron (L1_EM14) and muon (EF_mu10_MG) triggers. Results are shown for the VBF (upper section) and GF-only (lower section) components of the \( \mH \) = 200 \( \Gcs \) \( \htollnunu \) signal sample. Efficiencies are given both for the generated sample and for the sub-sample containing two reconstructed electrons or muons passing the selection requirements. In each case the results are broken down using truth information into \( H \rightarrow ee\nu\nu \), \( H \rightarrow \mu\mu\nu\nu \) and combined \( ee\nu\nu + \mu\mu\nu\nu \) events.

Selection based on Higgs decay products

The following paragraphs motivate the variables related to the Higgs boson decay products that provide good discrimination between signal and background, and adopt a baseline selection. This selection is based on an analysis targeting the GF component of the signal [19] [20], which was used for validation purposes. The cut variables identified in that work were investigated in the context of the VBF signal, and the cuts were changed appropriately. Comparisons are made with data and with pile-up Monte Carlo reweighted to reproduce the level of pile-up in the analysed data. This makes it possible to avoid regions showing discrepancies between the nominal (no pile-up) Monte Carlo, the reweighted pile-up Monte Carlo and the data, which maximises the robustness of the analysis.

An event reweighting procedure is applied to the pile-up Monte Carlo samples so that they reflect the level of pile-up seen in the data. The number of primary vertices with three or more tracks is plotted for the total background in the pile-up Monte Carlo and for the data [19]. This is done after the di-lepton mass window cut, which is motivated later in this section. Both distributions are normalised to unity. Event weights as a function of the number of primary vertices are then derived by taking the ratio of data to Monte Carlo in each bin. Agreement between the data and the pile-up Monte Carlo is much improved after reweighting.
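The reweighting procedure above divides unit-normalised data and Monte Carlo histograms of the vertex multiplicity, bin by bin. The sketch assumes the vertex counts (vertices with three or more tracks) have already been computed per event. Collecting overflow in the last bin and giving weight 0 to bins with no Monte Carlo events are assumptions. The function names are hypothetical.

```python
from collections import Counter


def normalised_histogram(n_vertices, max_bin):
    """Unit-normalised histogram of vertex multiplicity; the last bin collects overflow."""
    counts = Counter(min(n, max_bin) for n in n_vertices)
    total = sum(counts.values())
    return [counts[i] / total for i in range(max_bin + 1)]


def pileup_weights(n_vtx_data, n_vtx_mc, max_bin=10):
    """Per-bin event weights w(N_vtx) = data fraction / Monte Carlo fraction."""
    data = normalised_histogram(n_vtx_data, max_bin)
    mc = normalised_histogram(n_vtx_mc, max_bin)
    # Bins without Monte Carlo events get weight 0: no MC event ever uses them.
    return [d / m if m > 0 else 0.0 for d, m in zip(data, mc)]


def event_weight(weights, n_vtx):
    """Weight for a Monte Carlo event with n_vtx primary vertices."""
    return weights[min(n_vtx, len(weights) - 1)]
```

By construction, the weighted Monte Carlo vertex distribution reproduces the normalised data distribution, and the total weighted event count equals the unweighted one.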

Lepton Selection

Backgrounds with no real leptons from the hard process typically produce fake leptons with a softer \( \pt \) distribution. For backgrounds with real leptons, the \( \pt \) spectrum is similar to that of the signal. The lepton \( \pt \) cut therefore mainly serves to ensure that the selected leptons are of good quality. This allows further suppression of backgrounds without real leptons, such as QCD. As detailed in the section Electrons used in this analysis, electrons are required to have \( \et > \) 20 \( \GeV \) and muons are required to have \( \pt > \) 20 \( \Gc \).

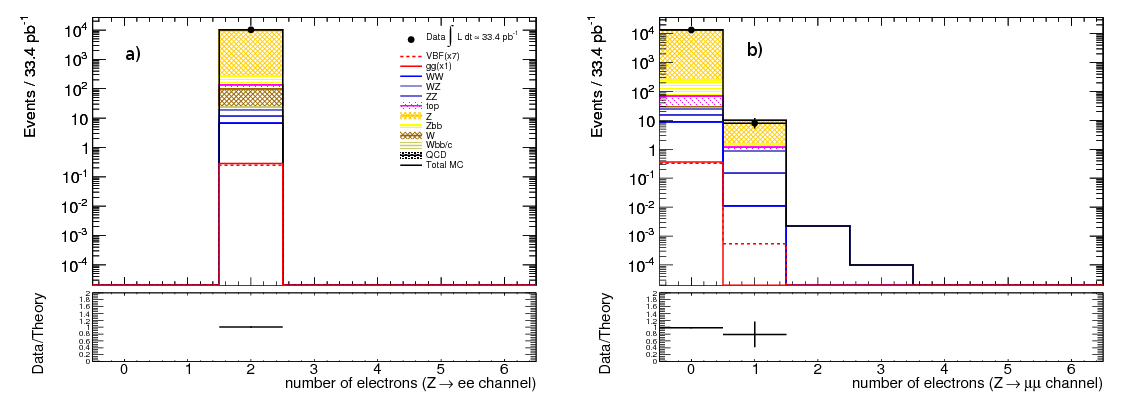

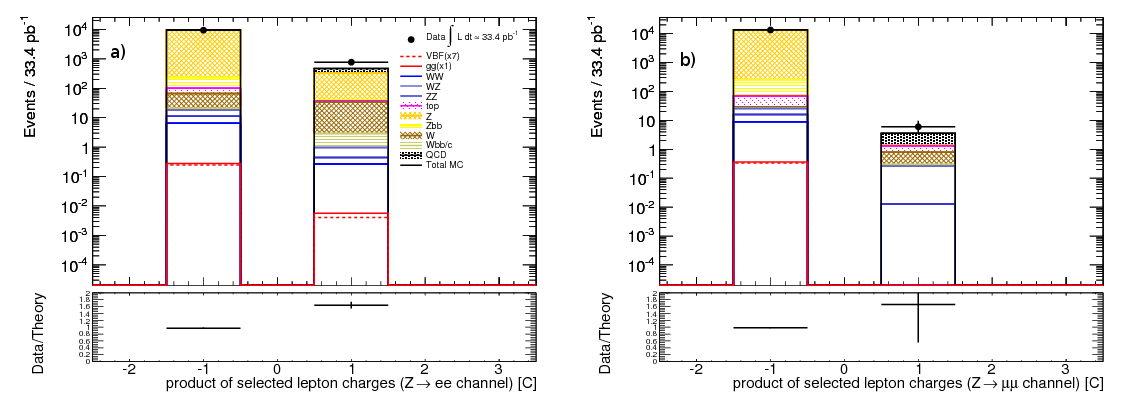

The signal topology contains two leptons from the leptonically decaying Z boson. This allows efficient rejection of backgrounds without isolated leptons, namely QCD. Figure 24 shows the electron and muon multiplicities for events with either two electrons (corresponding to \( \ztoee \) in the signal) or two muons (\( \ztomm \) in the signal). These leptons satisfy the criteria outlined in the section Physics Objects. Requiring exactly two electrons or exactly two muons, and no leptons of the other type, rejects some background, in particular the di-boson background. From now on, \( \ztoee \) refers to events with exactly two electrons and no muons, and \( \ztomm \) to events with exactly two muons and no electrons. In the \( \ztomm \) channel, at least one muon is required to be a combined muon to maintain good muon identification. The electron contamination in the \( \ztomm \) channel of the VBF signal may be due to mis-identified electrons.
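The channel definitions above can be sketched by combining the 20 \( \GeV \) lepton thresholds with the multiplicity requirements and the combined-muon condition. The sketch assumes that the electron and muon identification requirements have already been applied to the inputs. The function name and input format are hypothetical.

```python
def classify_channel(electron_ets, muons, lepton_pt_min=20.0):
    """Assign an event to the "ee" or "mumu" channel, or return None.

    electron_ets: E_T (GeV) of electrons passing the identification requirements.
    muons: (pT in GeV, is_combined) for muons passing the identification requirements.
    """
    electrons = [et for et in electron_ets if et > lepton_pt_min]
    good_muons = [(pt, comb) for pt, comb in muons if pt > lepton_pt_min]
    if len(electrons) == 2 and not good_muons:
        return "ee"
    # mumu: exactly two muons, no electrons, and at least one combined muon
    if len(good_muons) == 2 and not electrons and any(comb for _, comb in good_muons):
        return "mumu"
    return None
```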

Figure 24: Distribution of electron multiplicity in events with a) two electrons ( \( \ztoee \) ) or b) two muons ( \( \ztomm \) ). Signal corresponds to the \( \mH \) = 200 \( \Gcs \) sample and the contribution from each background (stacked) is shown.